An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale (Paper Explained)


- Published May 5, 2024

- #ai #research #transformers

Transformers are Ruining Convolutions. This paper, under review at ICLR, shows that given enough data, a standard Transformer can outperform Convolutional Neural Networks in image recognition tasks, which are classically tasks where CNNs excel. In this video, I explain the architecture of the Vision Transformer (ViT), the reason why it works better, and rant about why double-blind peer review is broken.

OUTLINE:

0:00 - Introduction

0:30 - Double-Blind Review is Broken

5:20 - Overview

6:55 - Transformers for Images

10:40 - Vision Transformer Architecture

16:30 - Experimental Results

18:45 - What does the Model Learn?

21:00 - Why Transformers are Ruining Everything

27:45 - Inductive Biases in Transformers

29:05 - Conclusion & Comments

Paper (Under Review): openreview.net/forum?id=YicbF...

Arxiv version: arxiv.org/abs/2010.11929

BiT Paper: arxiv.org/pdf/1912.11370.pdf

ImageNet-ReaL Paper: arxiv.org/abs/2006.07159

My Video on BiT (Big Transfer): • Big Transfer (BiT): Ge...

My Video on Transformers: • Attention Is All You Need

My Video on BERT: • BERT: Pre-training of ...

My Video on ResNets: • [Classic] Deep Residua...

Abstract: While the Transformer architecture has become the de-facto standard for natural language processing tasks, its applications to computer vision remain limited. In vision, attention is either applied in conjunction with convolutional networks, or used to replace certain components of convolutional networks while keeping their overall structure in place. We show that this reliance on CNNs is not necessary and a pure transformer can perform very well on image classification tasks when applied directly to sequences of image patches. When pre-trained on large amounts of data and transferred to multiple recognition benchmarks (ImageNet, CIFAR-100, VTAB, etc), Vision Transformer attains excellent results compared to state-of-the-art convolutional networks while requiring substantially fewer computational resources to train.

Authors: Anonymous / Under Review

Errata:

- Patches are not flattened, but vectorized
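
The abstract says the transformer is applied directly to sequences of image patches, and the erratum says the patches are vectorized. A minimal plain-Python sketch of that step, with nested lists standing in for a tensor; the name `patchify`, the 224 x 224 input size, and the row-major, channels-innermost ordering are our own assumptions, and the learned linear projection that follows in the model is left out:

```python
def patchify(image, patch_size):
    """Split an H x W x C image (nested lists: image[row][col] -> list of C
    channel values) into non-overlapping patch_size x patch_size patches and
    vectorize each patch into one flat list, row by row, channels innermost."""
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image sides must be divisible by the patch size")
    patches = []
    for top in range(0, height, patch_size):        # patch rows, top to bottom
        for left in range(0, width, patch_size):    # patch columns, left to right
            vector = []
            for row in range(top, top + patch_size):
                for col in range(left, left + patch_size):
                    vector.extend(image[row][col])  # all C channels of this pixel
            patches.append(vector)
    return patches


# Illustrative sizes (assumed): a 224 x 224 RGB image cut into 16 x 16 patches.
image = [[[0.0, 0.0, 0.0] for _ in range(224)] for _ in range(224)]
tokens = patchify(image, 16)
print(len(tokens), len(tokens[0]))  # 196 768
```

With these sizes the image becomes (224 / 16)^2 = 196 vectors of 16 * 16 * 3 = 768 numbers each; every vector then plays the role of one "word" in the input sequence.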

Links:

YouTube: / yannickilcher

Twitter: / ykilcher

Discord: / discord

BitChute: www.bitchute.com/channel/yann...

Minds: www.minds.com/ykilcher

Parler: parler.com/profile/YannicKilcher

LinkedIn: / yannic-kilcher-488534136

If you want to support me, the best thing to do is to share out the content :)

If you want to support me financially (completely optional and voluntary, but a lot of people have asked for this):

SubscribeStar: www.subscribestar.com/yannick...

Patreon: / yannickilcher

Bitcoin (BTC): bc1q49lsw3q325tr58ygf8sudx2dqfguclvngvy2cq

Ethereum (ETH): 0x7ad3513E3B8f66799f507Aa7874b1B0eBC7F85e2

Litecoin (LTC): LQW2TRyKYetVC8WjFkhpPhtpbDM4Vw7r9m

Monero (XMR): 4ACL8AGrEo5hAir8A9CeVrW8pEauWvnp1WnSDZxW7tziCDLhZAGsgzhRQABDnFy8yuM9fWJDviJPHKRjV4FWt19CJZN9D4n

Science & Technology

Holy cow, these videos are so valuable. This view that Transformers are a generalization of MLPs, while CNNs and LSTMs are specializations of them, is brilliant. Thank you.

Even the number of TPUs that they have used is enough to guess where this paper comes from.

Lol yeah xD

haha, yea

Too much is set in stone too soon. The scientific method in deep learning is generally very poor. You don't even need GPUs for large-scale deep learning if you use distributed, simple evolutionary training algorithms like Continuous Gray Code Optimization. With, say, 256 Raspberry Pi 4s you get 1,024 CPU cores for $15,000 and 4.5 kWh. With a training set of 1 million images, each core gets fewer than 1,000 images, for which it needs to return a cost for each sparse mutation list. The main metric is DRAM memory bandwidth per unit of hardware cost. This is especially the case with Fast Transform fixed-filter-bank neural networks.

It must be from Faceboogle

haha, then I think this paper breaks the rule of double-blind review
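
A quick check of the arithmetic in the Raspberry Pi comment in this thread, taking its figures (256 boards, 1 million training images) at face value; the variable names are our own:

```python
import math

# Figures taken from the comment (not independently verified).
boards = 256
cores_per_board = 4          # a Raspberry Pi 4 has a quad-core CPU
training_images = 1_000_000

cores = boards * cores_per_board
# Split the images as evenly as possible; the busiest core gets the ceiling.
max_images_per_core = math.ceil(training_images / cores)
print(cores, max_images_per_core)  # 1024 977
```

So "fewer than 1,000 images per core" holds: 1,000,000 / 1,024 is about 976.6, rounded up to 977 for the busiest core.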

I watched this video at the beginning of my ML Masters journey 2 years ago and it all felt like a foreign language. Today I'm watching it again and I’m proud to say that I understand a lot of the things discussed. Whoever is reading this, keep grinding!

I just had the exact same experience.

Welcome to the Matrix. Smith will visit you shortly with your medal.

I just started my master's and am feeling the same way you felt. I hope it gets easier.

Transformers still have inductive biases (no, I am not talking about the residual connections). For example, the softmaxes used to determine similarities between the elements, or the fact that we still use multiple layers, which still implies a hierarchical structure of sorts. But they have a weaker bias, in the sense that their biases take a much more abstract view of the world. A CNN's bias says: this is what images look like. Transformers say: this is how you can describe how a large set of unique things interact. And before CNNs, wavelet transforms were saying: this is what edges in an image look like. The biases are still there; they have just become much more abstract as we learned that we can learn anything if we apply the right training tricks and datasets. That said, residual connections are a very abstract concept, and for that reason I do not think we will be touching them for a while. They essentially say: look at the big picture first and give me your decision, then look again, but this time closer, and try revising your decision. That is a very efficient computational model that we as human beings employ on a daily basis. So are transformers, by the way.

Are biases always a bad thing?

@@Samarpanrai94 I don't think they are, but inductive bias is intrinsic to machine learning. It's presumably present in animals as well.

@@Samarpanrai94 It depends on where you work. If you are developing these models in the real world, biases can be amazing if they're correct. In an application, you want to help a model as much as possible. The idea is that, if you have enough data, these biases will be present in the dataset, and a learned bias is better than an assumed one. That's why research pushes on that front. In the limited-data regime, though, no, they're definitely not always bad.

I really love your intuition on the residual connections!

I'm slowly starting to grasp more and more what the heck is going on in these videos!! I've been watching your paper reviews for some time now, and I think this is the first time I actually get everything you're saying in a video.

That feeling is great. Thank you for your work!

You have a talent for making a complicated idea simple and digestible while keeping its technical fundamentals understandable.

I love the insights you give into the concepts presented in the paper. I very much like the last 2-3 minutes ranking NN architectures by bias

The comments about the biases of these different types of architecture really help me gain a better understanding of these things, thank you!

This is so helpful for an undergrad like me. Yannic explains topics in easy-to-understand terms. Thank you. Love from INDIA.

This was the best video on transformers I've seen on YT, still in 2022. I think Yannic may be one of the only people that is actually able to productively reason about the core dynamics, effectiveness and utility of ML model designs.

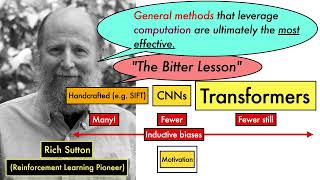

The Transformer architecture is a nice example of Sutton's "Bitter Lesson".

The "bitter lesson" bases success on increased compute, which was made possible entirely by more space- and energy-efficient hardware. Compute's success comes from miniaturization, not the other way around.

The irony :)

Hey! Greetings from Cali, I admire your work in the Machine Learning Colombia community.

It all comes back to kNN

I appreciate the jests :D great to have you pumping out videos again. Thanks for another banger :)

Great video. I love your intro about the anonymous paper. Thank you for sharing. Well done!

Excellent explanation of vision transformers. The only thing that can be concluded is that most large companies will offer their large models as a service through an API. It is simply very difficult to train such a large model with limited resources.

The generalization/specialization discussion was extremely helpful. Amazing content

Yannic, I absolutely love your reviews :D so energetic! thanks for your work man

Double-blind review as it is currently done is based on purely voluntary work by reviewers, ACs, etc., and on the mutual trust that i) reviewers respect the idea of double-blind review, which is necessary to reduce bias in paper evaluations, and ii) authors write their papers in an anonymous way. There are of course exceptions where this is broken, intentionally or unintentionally, but it would be very hard and resource-expensive for any conference to enforce this process with 100% accuracy.

At first I thought you were very happy with the double blind review process, but I soon found out that you weren't.

You have encouraged me to put my work on arXiv and continue to work hard on what I think is important, rather than wait for top journals/conferences to accept it. Thank you.

I like the idea of seeing the transformer as a more general version of the MLP, with even less inductive bias. Very insightful.

This video and especially the explanation about inductive biases are pure gold!

Amazing explanation especially about the comparison between transformer, mlp and cnn. Thank you so much!

Great video, Yannic, explaining transformers. You were on the money about who the anonymous authors were. No surprise there. I found your video because of another paper from Google, in this case from DeepMind, that also uses transformers, but applied to 3D meshes. The name of the paper is PolyGen: An Autoregressive Generative Model of 3D Meshes. I look forward to seeing you review this paper, if you ever do.

Is it the case that we are facing a transformer revolution in deep learning?

Very interesting and informative explanation, and also a very good complement to my own understanding, thanks.

It was difficult for me to understand the transformer until you mentioned "sets" at 7:06. Hallelujah! A little vocabulary precision can make a huge difference!

Your insights really helped me understand this paper. Thank you!

Yannic! Your channel is awesome, thank you for covering so many interesting things in a nice, digestible way! Stay cool

The discussion (and insight) that we've introduced these biases to simplify the Model's job and make up for inadequate training data, but in doing so have limited overall performance is incredible.

Nice explanation, thanks! For me it was super obvious just from their mentioning Kolesnikov so many times: you only do that if you're Kolesnikov and you're building off that work! It's obviously not anonymous.

It's impressive that you predicted that residual connections are going down next. I've seen multiple papers where people start improving on their complexity

This is an awesome explanation. We can learn everything from data if we have an infinite amount of data, but since we can never have an infinite amount of data, we must introduce inductive biases or strong priors and try to learn the universe from limited samples.

Nice review...especially the sections after 21:00.

Never got into your videos before, but your perspective is super interesting, thanks for making these videos :) I think your perspective on how we bias the models, and how you view transformers as the most general (even more so than MLPs), was insightful and something I had to reflect on.

no update on your channel

I want to read papers, but reading papers is hard and takes so much time. Your videos are so fast and clear. Thank you, my friend.

Very well presented ... thank you for putting in the effort.

Thank you for sharing; it's very useful and interesting! But I have a question. With a constant number of patches, why don't we use the naive positional encoding (0/n, 1/n, 2/n, ..., n/n)? I think if we use it, the distance between two different patches will not be affected by n, because n is constant.
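
A plain-Python sketch contrasting the scalar scheme in this question with the fixed sinusoidal encoding of the original Transformer ("Attention Is All You Need"). We index patches 0 to n-1, so there are n entries rather than n+1, and the function names are our own. For reference, the ViT paper itself uses learned position embeddings rather than either of these.

```python
import math

def naive_positions(n):
    """The commenter's scheme, indexing patches 0..n-1: position i -> i / n.
    Each patch gets a single scalar, and neighbours are always 1/n apart."""
    return [i / n for i in range(n)]

def sinusoidal_positions(n, dim):
    """Fixed sinusoidal encoding: even dimensions use sin, odd ones cos, at
    geometrically spaced frequencies, giving each position a distinct vector."""
    table = []
    for pos in range(n):
        row = []
        for k in range(dim):
            angle = pos / (10000 ** (2 * (k // 2) / dim))
            row.append(math.sin(angle) if k % 2 == 0 else math.cos(angle))
        table.append(row)
    return table

print(naive_positions(4))           # [0.0, 0.25, 0.5, 0.75]
print(sinusoidal_positions(1, 4))   # [[0.0, 1.0, 0.0, 1.0]]
```

One plausible reason the scalar version is not used: once added to a high-dimensional patch embedding, a single number in [0, 1) is a weak signal that is easily swamped, whereas vector encodings (sinusoidal or learned) can use as many dimensions as the embedding itself. Flattening a 2D grid into i/n also hides which patches share a row or a column.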

great video please keep on reading papers and explaining them!

'Double blind' - but only in one eye!

I wonder what would be more general than a transformer. Maybe something like:

- dynamic #convergence iterations (currently transformers do only a single projection step between layers)

- dynamic #layers

- which layers to skip to (potentially giving something like type determination where you can feed in data and if it is text it gets routed through one set of layers, if it is images, it gets routed another way or something like that)

- what amounts to wider-label attention, like, not only care about what the current and next layer of this specific neuron output/is looking for, but also their same-layer neighbours, or their equivalents from earlier/later neighbours. Not sure if that makes sense, but basically just give the soft routing mechanism more inputs to arrive at its decisions.

lol didn't mean to put hashtags like that. That was supposed to mean "number of"

The main problem with all of these ideas is backpropagation with gradient descent: how would it be able to train the parameters using the loss without a bidirectional relationship between the layers? Sure, I am not saying it is impossible; there might be ways to temporarily store the "routing" data, but that would be memory-consuming. All calculations need an equivalent reverse, or else you are lost regarding which weights to adjust in which direction to close in on your target output, unless you come up with a totally new concept of how to train your NN.

@@EsotericAI I mean yeah, definitely. And that's part of the reason *transformers* are so resource hungry as well, isn't it? I was just thinking about how to make this even more flexible, making use of even more patterns.

Really what I'm describing here, if all of those were implemented together, would amount to deciding what specific neural network to use based on current internal state and input. It's like having one full brain not just per task but literally per input.

It'd be the ultimate form of meta-learning, I think. And probably not something that'd ever become feasible at scale.

Kram1032 Yeah, perhaps I misunderstood your ideas. But transformers, when it comes to memory consumption, are all about storing weights; they are not storing temporary states for all connections in all layers during iteration (training or sampling). When one layer is done, basically all it keeps in memory is the output of that layer. The same goes for backpropagation: it only keeps the gradient temporarily in memory between layers. So it has no memory of what happened in one particular layer on the forward pass when the gradient comes back and the weights are trained. The model needs to know what to do with the weights by using the gradient only; it cannot hold in memory everything that happened there for the current input and the current iteration. Any layer skips or arbitrary adjustments between nodes on the same layer must be possible to reverse-engineer during backpropagation using the gradient only. Well, I'm not sure if my poor wording here is helpful; sorry if my explanations are just confusing.

@@EsotericAI I mean, yeah. All I was trying to accomplish here is to further generalize Transformers. I make no claims about any of these actually being good or remotely accomplishable ideas.

(I think there's *some* success with the dynamic number of layers stuff in some settings at least. Not quite *truly* dynamic, but some architectures can stop evaluating early when they are "sure enough". Maybe transformers could be done that way too.)

I love your channel my friend thank you so much this channel helped a lot

Awesome, I was wondering a while ago whether the operation that a network performs at each layer is "too static". In a normal CNN the operations are completely defined by the programmer and only the weights are learned. But maybe the operations themselves can be learned as well. Seems like Transformers are going a bit in that direction, if I understood everything correctly.

"Look, we know you can learn anything from data" - Yannic to MLP.

22:46 I think that, while you use the terms inductive bias and inductive prior interchangeably in this passage, you are really describing an inductive BIAS rather than an inductive prior. The difference would be that an inductive prior sets a starting point until your model gets informed by your data, while an inductive BIAS inevitably limits the space of possible model solutions. Both occur together, as when you bias your DL model through its architecture while giving it a prior initialization of its parameters. Have I misunderstood something? Either way: I love your videos, thanks!

Thanks for the clarifications!

I figured it out! Google must have submitted that article.

That intro was Epic :)

I wonder if the initial "downsample" to a low-resolution image could be avoided (without insane compute) using a transformer of transformers: one spatial, one temporal. The inner (spatial) transformer would be just like this paper on a downsampled image, but instead of downsampling the whole image you downsample a window (still to a fixed low-res size). You then do this on N windows to form a sequence (sort of like your eye temporally moving around, focusing on parts of the image), and transform that.

fuck it. I have nothing better to do, I'll try this out

@@SuperArjun11 do let us know

@@SuperArjun11 It's been 10 months, how did it turn out?

@@SuperArjun11 PLz respond ..

did you try

@@thiswasme5452 in a very sad turn of events, I got out of academia and into corporate before any of this was realised. Apologies, y'all 😭

Awesome video explanation 👍

Wow, thank you for the video! I'm a beginner at deep learning and had just started reading this paper. This really helped me get started with it!

Not sure if I buy your argument that transformers are somehow more general and less biased than MLPs. They implement the prior of repeated computation and spatial invariance. In addition to those priors, I think the real reason transformers win over MLPs is that we give them a multiplication operation in representation space (attention). MLPs only get to perform addition of their hidden representations.

If the MLP is big enough, it will always win. That's the universal approximation theorem.

Getting rid of back propagation altogether could be a "next thing". Maybe by randomly approximating the weights, maybe using some sort of very-fast-very-parallel-hardware that would update all weights at once with random values. And maybe afterwards make this process be learnable somehow :/ (maybe learnable as in disconnecting neurons that are not needed)

Maybe also having a generative neural network synthesizing datasets from a small amount of samples, so that if the model sees an input that was not in the dataset, it may have "dreamed" about it during training and be more robust.

The bogo sort approach to NNs

@@gman21xx if the hardware were built to work like this, it could be very fast and very low-energy. And in principle it is "guaranteed" to converge _at some point_ from now to infinity XD

This paper might be onto something openreview.net/forum?id=PdauS7wZBfC

@@ptuls79 a review of that once it is published would be very cool. It would be interesting to apply it to transformers.

@@gman21xx just look at the best case scenario, O(1), it is obviously the best alg for the job.

I love your take on the matter. Very eye-opening

Liked in just the first 3 minutes for the laughs on your very accurate jokes. Then I saw the entire video, I would have liked it anyway ;) thanks great work

Thank you so much for the video! It's a pleasure as always. Should we just stop AI research for 20 years and then train a Transformer net without skip connections on quantum computers with yottabytes of image data? It would save a lot of work 😜

You win machine learning!

MLP bias/prior is the connections themselves. Very Interesting. So the most general system would have the entire architecture and structure be computed on the fly.

As humans when we think of attention in vision. It is not that our eyes shut down when we are not paying attention to our surroundings. Our eyes keep running object detection/motion detection tasks with dedicated neurons. It is just the higher order functions decide not to pay attention to that already processed information and use that bandwidth on something else.

19:30 Attention is a generalization of convolutions.

10:50 I think this is equivalent to running the image through a 16x16x3x16 conv filter with stride 16 (i.e. it takes a 256x256x3 tensor and gives a 16x16x16 tensor), then passing it through a few layers of 16x16x16x16 conv with stride 1 and "same" padding (i.e. a conv block which takes a 16x16x16 tensor and spits out a 16x16x16 tensor), and then a dense layer at the end for classification. MHA is equivalent to a full nxn conv.

20:40 A 16x16 conv2d could do the same.

Seriously though, I don't see the benefit of double-blind review in non-medical papers.

I think it's mentioned somewhere the 256x256 image is downscaled to 64x64 due to limits on their budget :D

And I think they are using a stride of 8 with the 16x16 patches (the figure with the positional encoding shows 7x7).

yep the comparisons with convolutions are really nice!
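
The 10:50 comment in this thread claims the patch step equals a 16x16 convolution with stride 16. A small plain-Python check of that claim for the embedding step only (not the attention layers): a linear projection of vectorized, non-overlapping p x p patches gives the same numbers as a convolution whose kernel size and stride are both p, once the projection matrix is reshaped into kernels. The function names, sizes, and patch-vectorization order are our own assumptions.

```python
import random

def patch_embed_linear(image, weights, p):
    """ViT-style patch embedding: vectorize each non-overlapping p x p patch
    (row-major, channels innermost) and multiply it by a (p*p*C) x D matrix."""
    height, width = len(image), len(image[0])
    dim = len(weights[0])
    grid = []
    for top in range(0, height, p):
        row_out = []
        for left in range(0, width, p):
            vec = [v for r in range(top, top + p)
                   for c in range(left, left + p)
                   for v in image[r][c]]
            row_out.append([sum(vec[i] * weights[i][d] for i in range(len(vec)))
                            for d in range(dim)])
        grid.append(row_out)
    return grid

def conv2d_kernel_eq_stride(image, kernels, p):
    """Plain 2D convolution (cross-correlation, no padding) whose kernel size
    and stride are both p. kernels[d][dr][dc][ch] is a weight of output channel d."""
    height, width, channels = len(image), len(image[0]), len(image[0][0])
    grid = []
    for top in range(0, height - p + 1, p):
        row_out = []
        for left in range(0, width - p + 1, p):
            row_out.append([sum(k[dr][dc][ch] * image[top + dr][left + dc][ch]
                                for dr in range(p) for dc in range(p)
                                for ch in range(channels))
                            for k in kernels])
        grid.append(row_out)
    return grid

# Small random integer example; all sizes are arbitrary assumptions.
rng = random.Random(0)
height = width = 8
channels, p, dim = 3, 4, 5
image = [[[rng.randint(-3, 3) for _ in range(channels)] for _ in range(width)]
         for _ in range(height)]
weights = [[rng.randint(-3, 3) for _ in range(dim)] for _ in range(p * p * channels)]
# Reshape the (p*p*C) x D projection into D kernels of shape p x p x C.
kernels = [[[[weights[(dr * p + dc) * channels + ch][d] for ch in range(channels)]
             for dc in range(p)] for dr in range(p)] for d in range(dim)]

print(patch_embed_linear(image, weights, p) == conv2d_kernel_eq_stride(image, kernels, p))  # True
```

For the commenter's 256 x 256 input with p = 16, the same construction gives a 16 x 16 grid of embeddings, since 256 / 16 = 16.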

Great video. I have a quick question, though: when you mention the difference between the MLP and the transformer, you say transformers compute w on the fly (12:02). Isn't w in a transformer also fixed?

In an MLP, $x_i^{l+1} = \sum_j w_{ij} x_j^{l}$, where the $w_{ij}$ are fixed and don't depend on the concrete input. If you make the comparison using the attention-matrix elements in this context, then the $x_i^{l}$ are vectors and the coefficients depend on the keys and queries, which change with the input (they are computed on the fly).
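
A plain-Python sketch of the distinction in this reply: both layers compute a weighted sum over the inputs, but in the MLP-style mix the coefficients are a fixed matrix, while in self-attention they are recomputed from the current input. The identity query/key projections and the function names are simplifying assumptions, not the paper's exact layer.

```python
import math

def mlp_mix(xs, w):
    """x_i^{l+1} = sum_j w_ij x_j^l with a fixed matrix w: the same
    coefficients are used whatever the input is."""
    n, d = len(xs), len(xs[0])
    return [[sum(w[i][j] * xs[j][k] for j in range(n)) for k in range(d)]
            for i in range(n)]

def attention_weights(xs):
    """w_ij = softmax_j(x_i . x_j / sqrt(d)), recomputed from the input on every
    forward pass. Query/key projections are taken as the identity here, a
    simplification; real attention layers learn them."""
    d = len(xs[0])
    rows = []
    for q in xs:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in xs]
        m = max(scores)                      # subtract the max for numerical stability
        exps = [math.exp(s - m) for s in scores]
        total = sum(exps)
        rows.append([e / total for e in exps])
    return rows

def attention_mix(xs):
    """Same weighted sum as mlp_mix, but with input-dependent coefficients."""
    return mlp_mix(xs, attention_weights(xs))

a = [[1.0, 0.0], [0.0, 1.0]]
b = [[1.0, 0.0], [1.0, 0.0]]
print(attention_weights(b))                          # [[0.5, 0.5], [0.5, 0.5]]
print(attention_weights(a) == attention_weights(b))  # False: weights follow the input
```

The learned parameters of an attention layer (its projection matrices) are fixed after training, just like an MLP's; what is "on the fly" is the mixing matrix they produce for each input.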

The whisper part in Experimental Results got me real good )))

Thanks tons for sharing this! You are really good at explaining papers :) I'm new to transformers, so please bear with my potentially noobish question. Would you say that one disadvantage of transformers (probably stemming from their general design) is that, compared to CNNs, larger amounts of data are required in practice to train good models? Thanks for your feedback.

Have a look at compact transformers

Great video, thank you for showing the bigger picture

Another great video bro !!

Great explanation!

I really enjoy your humor! Totally random comment, absolutely not related to any similarities between papers.

Good point about convolution being an inductive bias

Having a bias but a small variance while making use of the Markov property at the same time might be the closest to human intelligence for learning the real world model. Is this what the Temporal Difference Learning discloses?

2D slices of high-dimensional loss landscapes show that skip connections make the landscape much smoother/convex. My intuition is that they will remain, but who knows?! :)

Even if famous groups of authors can be identified easily in open review, it probably still benefits lesser-known authors.

It is disappointing how the presenter takes 5 minutes or so to mock the concept but does not seem to understand how this may help a small researcher from an underdeveloped part of the world. Also more steps can easily be implemented to improve the anonymity. There is nothing to mock here.

@@ilkero1067 hear hear. It came across as extremely facetious

Great insights on the transformer

Thanks for your sharing. It is very useful

Fantastic video as always

Great great video! As a PhD student, thank you for making this valuable video

Hey, what's the app you use to annotate your papers? Thank you!

He uses OneNote

In his Videos tab you can search for a video where he explains all the tools and the process he uses. Here, for your easy reference, I have linked two of his very generous videos: Yannic’s How to read research papers ua-cam.com/video/Uumd2zOOz60/v-deo.html

Yannic’s How I make my videos ua-cam.com/video/H3Bhlan0mE0/v-deo.html


We are so close to this being a reality, friends. Hyperrealistic images from a simple definition. GptI was really promising; imagine the quality if a model the size of GPT-3 was trained to do it.

Nice to meet another Yannick! Would be interesting to apply transformers to activity detection.

Excellent video summary! Can you explain why you think the Transformer is a "more general" architecture? It seems to me that it has more "inductive biases", but wouldn't that make it less general? What is it about transformers that makes them more general than MLPs?

the re-computation of weights at every forward pass

Authors: Let's be anonymous and make the readers go nuts.

Kilcher: 🤭

anonymity is required for papers currently under peer-review.

What do you mean by these connections in transformers being computed on the fly? Training time?

I don't think skip-connections are as biased as you claim. The visual intuition is: you might recognize an alligator by the texture of its skin combined with higher order features like the ensemble of shapes that make up its body. But the intuition can be generalized beyond just the visual domain. Sometimes a combination of low-level and high-level features is more informative than just high-level features on their own.

If your language model is reading some Eminem lyrics and outputs only high-level features like the subject matter, sentiment, theme, etc., it might classify them as Country Western if it abstracts away low-level features like syllables and rhyme.

Can you do a python implementation of this model using some dataset and use the model for inference?

Great explanation, but I'm still confused about one thing. I tried reading the paper, but it still didn't really click. My question is about the "extra learnable [class] embedding". Can someone give some examples of what the input to this embedding is? Is the input going to be the same special token (*) for all input samples, or is the class label going to be added here? (The latter doesn't seem very sensible.) But what's the point of having a learnable embedding if the input is always static?

yes exactly, it's the same token always
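
A minimal plain-Python sketch of this answer, with hypothetical names and sizes: the [class] input is one shared learned vector, identical for every image, and the label never enters it.

```python
import random

def prepend_class_token(patch_embeddings, class_token):
    """Prepend the same learnable [class] vector to every input sequence.
    The vector is a model parameter: it does not depend on the image or on
    its label, and training updates it by gradient descent like any weight."""
    return [list(class_token)] + [list(e) for e in patch_embeddings]

rng = random.Random(0)
D = 4
# Initialized once (the 0.02 scale is an illustrative assumption), then trained.
class_token = [rng.gauss(0.0, 0.02) for _ in range(D)]

image_a = [[1.0] * D, [2.0] * D]   # two patch embeddings of one image
image_b = [[5.0] * D, [6.0] * D]   # two patch embeddings of another image
seq_a = prepend_class_token(image_a, class_token)
seq_b = prepend_class_token(image_b, class_token)
print(seq_a[0] == seq_b[0])  # True: identical input at position 0
```

Why make a constant input learnable? Its value is tuned during training so that, after the attention layers mix in information from the patches, the output at position 0 (which the classification head reads) summarizes the image well. That output still differs from image to image even though the input at that position never does.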

Good explanation. I disagree with the opinion that residual connections are an inductive bias. Without a residual connection, a layer might not be able to learn an identity mapping because of the nonlinear transformations in the layers.
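
A tiny plain-Python illustration of this argument, under the simplifying assumption of a single dense layer with a ReLU (the function names are our own): the plain layer can never pass a negative input through unchanged, whatever its weights, while the residual version reproduces its input exactly when its weights are zero.

```python
def dense_relu(x, weights, bias):
    """One fully connected layer followed by ReLU (outputs are never negative)."""
    return [max(0.0, sum(w * v for w, v in zip(row, x)) + b)
            for row, b in zip(weights, bias)]

def residual_block(x, weights, bias):
    """y = x + f(x): if f's weights and bias are all zero, the block is exactly
    the identity, so 'do nothing' is an easy default to learn."""
    return [a + b for a, b in zip(x, dense_relu(x, weights, bias))]

x = [1.0, -2.0, 3.0]
zeros = [[0.0] * 3 for _ in range(3)]
identity = [[1.0 if i == j else 0.0 for j in range(3)] for i in range(3)]

print(residual_block(x, zeros, [0.0] * 3))   # [1.0, -2.0, 3.0]
print(dense_relu(x, identity, [0.0] * 3))    # [1.0, 0.0, 3.0]: the -2 is lost
```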

Which program are you using to annotate your papers?

24:18 "Bias -- in the statistical sense". I see someone has learned from Yann's twitter feud 😬

Hi, in the video you claim that with a CNN you cannot relate objects that are far apart (around minute 21).

But when we put fully connected layers after flattening the CNN layers, we should get all combinations of those features regardless of how far apart they are. Am I wrong?
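
Both sides of this exchange can be made concrete with the standard receptive-field recurrence, sketched here in plain Python; the layer configurations are illustrative assumptions, not taken from the paper. A convolutional unit only sees a window that grows with depth:

```python
def receptive_field(layers):
    """Receptive field, in input pixels along one axis, of a single output unit
    after a stack of conv/pool layers given as (kernel_size, stride) pairs.
    Each layer widens the field by (kernel_size - 1) times the product of
    all earlier strides (the 'jump' between neighbouring units)."""
    field, jump = 1, 1
    for kernel_size, stride in layers:
        field += (kernel_size - 1) * jump
        jump *= stride
    return field

print(receptive_field([(3, 1)] * 5))   # 11: five 3x3 stride-1 convs see an 11x11 window
print(receptive_field([(3, 2)] * 3))   # 15
```

So the reply is right that a dense layer on the flattened feature map can combine features from any two locations, but only at the very end of the network and with a separate weight per position, whereas self-attention can relate any pair of patches in every layer.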

What does this mean for cases with little data though? Are conv NNs and LSTM still good because of their inductive priors? Or could you create even better inductive priors using information from other datasets? Let's say you want to classify cats and dogs, you could infer from images of houses, streets, cars, and so on that there is a general theme of spatial correlation (like conv NNs assume), and maybe even do better than that at generating an inductive prior.

I think especially in the low data regime, models with inductive priors will always remain useful. Like 95% of real world ML problems are solved by linear regression.

18:14 so fun :D

I'd be open to an ASMR paper review from Yannic lol

@@lucidraisin I came down to the comments just to say this! Haha!

An unrelated question but... What program is this that you are using to view and have this side references? I need a tool like this!

OneNote, it's just a copy paste on the side.

I think transformers are more general simply because they take into account the lateral connections between the neurons. This is what self-attention does in principle. The human brain has lateral connections among the neurons too.

What is meant by lateral connections?

Brilliant best ever explaining

Now what about attention between the pixels within the same patch?

Hello Yannic, at 25:38 you say that the connections in a transformer are calculated on the fly. Aren't the connections in an MLP also calculated on the fly? Also, can you tell me how long one TPUv3-day is? Thanks in advance.

No, the connections in an MLP are the learned weights: they are learned during training and then remain frozen during inference.

@@theomorales5040 Thank you for replying. Now I'm clear about this

@@theomorales5040 Any idea how long one TPUv3-day is?

@@hareshindrajit Precisely 1 day using 1 TPUv3.

@@theomorales5040 Okay. That makes sense 👍
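
The unit in this exchange is a product, not a duration: a budget of N TPUv3-days can mean one device for N days or N devices for one day. A minimal sketch with hypothetical numbers and function names; when comparing papers, it is also worth checking whether a budget is counted per chip or per core, since a TPU v3 chip has two TensorCores.

```python
def device_days(num_devices, days):
    """Compute budget in device-days: number of accelerators times wall-clock days."""
    return num_devices * days

def days_on(budget_device_days, num_devices):
    """Wall-clock days a given budget takes on num_devices, assuming perfect
    linear scaling (optimistic in practice)."""
    return budget_device_days / num_devices

print(device_days(1, 1))      # 1: the reply's "1 day using 1 TPUv3"
print(device_days(8, 30))     # 240
print(days_on(240, 8))        # 30.0
```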

Could you explain a little more about how Transformers are a generalized version of MLPs? Aren't the weights of MLPs also found on the fly?

I'm not too sure about this, correct me if I'm wrong here:

In MLPs, there isn't a choice to attend to a node from the previous layer just that the weight decides how strong the attention is. Transformers can choose to skip a node they don't want to pay attention to altogether.

Hi Yannick, I love your videos because you always ask a lot of questions and don't just read the paper. If I understood correctly, you say transformers are more general because, in addition to the feed-forward connections, we have self-attention, where we calculate the node/neuron values each time before the feed-forward step. Could you please elaborate a little on why this is more general than an MLP? Shouldn't MLPs be able to figure this out given enough data, thanks to their full connectivity, which would make self-attention another inductive bias in a way?

The thing with traditional fully connected layers is that the weights are fixed by position. For example, if you swap two words in a sentence, the output vector of any given layer will change (FC layers are not invariant to permutations). This could make sense in some cases, where the order of those two words affects the meaning of the sentence, but in other cases the order should not matter; that's what the attention layer is learning. Of course, you could train an MLP with a lot of combinations like you said, but that would use many more parameters, and I believe that in practice you just can't match the transformer's performance.
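
The point about weights being fixed by position can be checked directly. A plain-Python sketch (identity projections, no positional encoding, and hypothetical function names, all simplifying assumptions): permuting the tokens fed to self-attention only permutes its outputs, whereas a dense layer over the flattened sequence gives a different answer. This set-like behaviour is also why ViT has to add position embeddings to the patches.

```python
import math

def self_attention(xs):
    """Single-head self-attention with identity query/key/value projections and
    no positional encoding (simplifying assumptions for this sketch)."""
    d = len(xs[0])
    out = []
    for q in xs:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in xs]
        m = max(scores)
        exps = [math.exp(s - m) for s in scores]
        total = sum(exps)
        out.append([sum(e * x[c] for e, x in zip(exps, xs)) / total for c in range(d)])
    return out

def dense_on_flattened(xs, w):
    """A fully connected layer over the concatenated sequence: each weight is
    tied to a fixed slot, so swapping tokens changes the result."""
    flat = [v for x in xs for v in x]
    return [sum(wi * v for wi, v in zip(row, flat)) for row in w]

xs = [[1.0, 0.0], [0.0, 2.0], [1.0, 1.0]]
perm = [2, 0, 1]
swapped = [xs[i] for i in perm]

# Attention: permuting the input just permutes the output (equivariance).
expected = [self_attention(xs)[i] for i in perm]
print(all(abs(u - v) < 1e-9 for ru, rv in zip(self_attention(swapped), expected)
          for u, v in zip(ru, rv)))                                   # True
# Dense layer over positions: the output itself changes.
w = [[1.0, 2.0, 3.0, 4.0, 5.0, 6.0]]
print(dense_on_flattened(xs, w), dense_on_flattened(swapped, w))  # [20.0] [18.0]
```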

Is there a paper that tries to apply attention to which layers should skip forward? Like attention for densenets but not necessarily with the cnn part

Btw great video as always, thanks for all your work

Yannick is at his best when he goes hard with the shade :>

Does the fact that I can't read your username mean I'm a robot? :(

@@nineteenfortyeight6762 Not even, any self-respecting model trained on MNIST would be able to 😌

Please tell captcha

I would actually consider the transformer as a regularized MLP (i.e., a special case of MLP, not the opposite way around). The weights that connect nodes between layers are regularized by the similarities of the connected nodes.

27:28 "There can be something even more general than the transformer" - Yes and that something is called a GNN!

Check out this blog Yannic: thegradient.pub/transformers-are-graph-neural-networks/

And thanks for the video! I saw the paper the same day that Karpathy tweeted it.

Edit: forgot you made this one back in the day when GNNs were not as popular.

You have a great channel too

Great explanation

So... how does this compare to adding 'zoom'/'pan' functions, adding xyz+self embeddings, and letting a transformer tell a single 16x16 fully connected layer where to look? Did I beat google?

Wouldn't the most general learning system be a fully connected graph with something like an FIR filter for each connection instead of weights?

true

Can we use BERT for video classification? If yes, please share some resources.

BERT is a transformer model trained on text. There will be architectures similar to this paper for videos in the future.

Do you guys think that this method is very similar to NON LOCAL?