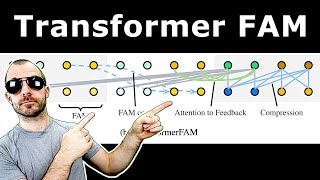

Leave No Context Behind: Efficient Infinite Context Transformers with Infini-attention


- Published 23 Apr 2024

- Google researchers achieve supposedly infinite context attention via compressive memory.

Paper: arxiv.org/abs/2404.07143

Abstract:

This work introduces an efficient method to scale Transformer-based Large Language Models (LLMs) to infinitely long inputs with bounded memory and computation. A key component in our proposed approach is a new attention technique dubbed Infini-attention. The Infini-attention incorporates a compressive memory into the vanilla attention mechanism and builds in both masked local attention and long-term linear attention mechanisms in a single Transformer block. We demonstrate the effectiveness of our approach on long-context language modeling benchmarks, 1M sequence length passkey context block retrieval and 500K length book summarization tasks with 1B and 8B LLMs. Our approach introduces minimal bounded memory parameters and enables fast streaming inference for LLMs.

Authors: Tsendsuren Munkhdalai, Manaal Faruqui, Siddharth Gopal

Links:

Homepage: ykilcher.com

Merch: ykilcher.com/merch

YouTube: / yannickilcher

Twitter: / ykilcher

Discord: ykilcher.com/discord

LinkedIn: / ykilcher

If you want to support me, the best thing to do is to share out the content :)

If you want to support me financially (completely optional and voluntary, but a lot of people have asked for this):

SubscribeStar: www.subscribestar.com/yannick...

Patreon: / yannickilcher

Bitcoin (BTC): bc1q49lsw3q325tr58ygf8sudx2dqfguclvngvy2cq

Ethereum (ETH): 0x7ad3513E3B8f66799f507Aa7874b1B0eBC7F85e2

Litecoin (LTC): LQW2TRyKYetVC8WjFkhpPhtpbDM4Vw7r9m

Monero (XMR): 4ACL8AGrEo5hAir8A9CeVrW8pEauWvnp1WnSDZxW7tziCDLhZAGsgzhRQABDnFy8yuM9fWJDviJPHKRjV4FWt19CJZN9D4n - Science & Technology

I can't tell you how much I love these paper reviews.

Me too. I also really would like to see videos on older papers and in what open models those algorithms got implemented.

So you have actual examples on implementations and you can see if you understand something.

His sarcasm is delightful

Ha ha ha. The RNN bit in the beginning nailed it. But hey, it was and still is a good idea.

That intro was pure gold xD

Oh nice! read this paper last week, currently trying to replicate it for a home project. Interesting of note is that there have been several papers linking hopfield networks with attention mechanisms recently - if I understand it right storing new KV pairs into the compressive memory is effectively the same as storing additional patterns in a hopfield network/associative memory. Querying the memory is the same as allowing a state pattern to evolve to a fixed point attractor (which are the stored memories in this case). everything is connected man.
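
The store-and-query behaviour described in the comment above can be sketched in a few lines. This is a minimal illustration, assuming the linear-attention-style update from the paper as I read it (the memory grows by σ(K)ᵀV and is read with σ(Q), with σ = ELU + 1). The class and function names are made up for this sketch, it uses plain Python lists to stay dependency-free, and it leaves out the local attention branch, the learned gate and the delta-rule variant.

```python
import math


def elu_plus_one(x):
    """Feature map sigma(x) = ELU(x) + 1, which is always positive."""
    return x + 1.0 if x > 0 else math.exp(x)


def feature(vec):
    """Apply the feature map elementwise to a key or query vector."""
    return [elu_plus_one(x) for x in vec]


class CompressiveMemory:
    """Hypothetical fixed-size associative memory: a d_k x d_v matrix plus a normaliser."""

    def __init__(self, d_k, d_v):
        self.M = [[0.0] * d_v for _ in range(d_k)]  # memory matrix
        self.z = [0.0] * d_k                        # running sum of sigma(key)

    def store(self, key, value):
        """Add the outer product sigma(key) value^T; the size of M never changes."""
        phi = feature(key)
        for i, p in enumerate(phi):
            self.z[i] += p
            for j, v in enumerate(value):
                self.M[i][j] += p * v

    def retrieve(self, query):
        """Read sigma(query) M, normalised by sigma(query) . z.

        Assumes at least one pair has been stored, otherwise the
        denominator is zero.
        """
        phi = feature(query)
        denom = sum(p * zi for p, zi in zip(phi, self.z))
        d_v = len(self.M[0])
        return [
            sum(p * row[j] for p, row in zip(phi, self.M)) / denom
            for j in range(d_v)
        ]


# Two well-separated keys, each bound to a one-hot value.
mem = CompressiveMemory(d_k=2, d_v=2)
mem.store([4.0, -4.0], [1.0, 0.0])
mem.store([-4.0, 4.0], [0.0, 1.0])

# Querying with the first key returns mostly the first value,
# slightly blurred by the second stored pair.
out = mem.retrieve([4.0, -4.0])
```

Retrieval here is a single normalised matrix product rather than an iterated update, so whether it matches the fixed-point picture of a Hopfield network exactly is left open. The sketch only shows that new pairs are superimposed on one matrix and read back approximately.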

Everything is connected man

The connection between attention and hopfield networks is intriguing!

Thank you for explaining RNNs!!

Always good to have a recap of a relic from ancient history

😂😂

Man, he really destroyed the paper. I didn't notice the obvious flaws in the method during my first read of the paper, but this video convinced me that Infini-attention is not a notable improvement of any sort. Really entertaining.

Where did he destroy the paper? All he said is that the method is limited by the limitations of the linear attention mechanism. The method still contains novel aspects and shows a performance improvement. Maybe the intrinsic recurrent mechanism is not very novel, but its 'neat' use of memory throughout all layers looks interesting, at least to me.

He didn't destroy the paper, he is just skeptical, because this relies on an approximation of an approximation to work.

My perfect morning goes like this. Wake up, get a cup of coffee, and watch Yannic review a paper adding his commentary. Perfection!

I really appreciate the paper reviews. And the reminder to stay hydrated!

Thanks for this content, some of the best on youtube. Keep it up!

I would love to see reviews of old-mythical papers too!

Thank you so much for this. I don't always need help with a paper, but when I do, it is a blessing to have someone 100x more knowledgeable than me explain the context.

Brilliant and fun video

Great video. Well explained.

TIL about associative memory! It's such a cool idea!

Awesome explanation!! Sarcasm too!!

Looking forward to seeing your analysis of the FAM-transformer architecture.

I get to learn a lot from you, Thank you,

Incredible.

Thanks !

if my memory about this were correct, infinite attention was first introduced by Vaswani in 2022. It's in fact the dynamic model which could update constantly but 114x compression comes at expense of layers of complexity.

The shade 😆

I personally think the memory part is kind of a "semi-gradient" thing, similar to the concept used in DQN. Since it is going to store context over very long text, if the memory part still held gradients, training would get harder and slower as the text gets longer. So, once context is accumulated into memory, it is treated as a constant that feeds the downstream computation, which is scalable.

Correct me if I am wrong.

i love your content habibi

after having read the mamba papers and the abstract and conclusion (without anything else) of this paper, I too was drawn to drawing an RNN for no reason. :D

You are so funny mate! Seriously

Thanks a lot for the content. I share your scepticism. I think infinite attention needs to come from some sort of hierarchical tokens which are learned at different levels of the transformer. With a large receptive field far into the past for tokens high up. And with high level tokens spread out thousands or millions of tokens apart. This way, attention between high level tokens can and must span entire disciplines.

The benchmark should be book-length stories with facts introduced at the beginning and combined with events towards the end. Make for a great kind of benchmark too ...

I think it is a flaw in the current transformer architecture that all layers have the same receptive field which is the input context window. The MLP layers could be used to thin them out and merge with thinned out past content from X regression steps ago. X could increase like a clock where high layers clock in days and low layers clock in seconds. Of course, needs a logarithmic generalization of the positional embedding. But that should be quite feasible.

Sounds like instead of an encoder-decoder architecture this would be a “many encoder”-decoder architecture?

Didn't RWKV try a similar idea with their 'token shift', so that later layers could 'see' more tokens? It reminds me of CNNs to some degree. Its field does not span that far, definitely not up to book length, but the concept is there?

Yannic somehow missed the 1B token context paper "LongNet: Scaling Transformers to 1,000,000,000 Tokens". It uses a clever dilation scheme to keep matrices manageable.

Somehow it didn't catch on, maybe accuracy proved to be insufficient.

Glad to see Kitboga finally embracing AI

Bro 😂

RNNs not dead yet!

Thx!

It is my intuition that if increasing the size of the input prompt is impossible, some sort of compressed memory of past tokens that are no longer part of the input would be required. I can imagine a GPT-3-size neural network whose only job is to roughly "remember" what's been said before the current prompt, with its higher layers of abstraction somehow connected to the higher levels of the language model so that it influences the output in a very abstract semantic form. Ideally a model would be capable of reconstructing past prompts from this abstract memory with high accuracy.

When you explain attention and compare it to a classical network you say that the "weighted sum" is computed "dynamically" vs "statically".

I don't understand what you mean by that. I've heard many explanations of attention, but its always good to hear new ones.

Could you clarify what "dynamic" means in this context?
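
One common reading of "dynamic" versus "static", which may or may not be exactly what the video meant: in a classical layer the mixing weights are fixed after training and tied to positions, while in attention the weights are recomputed from the input every time. A small sketch, with made-up function names and with the tokens doubling as keys and values (no learned projections) to keep it short:

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def static_mix(tokens, weights):
    """Weighted sum with given weights, like one row of a trained dense layer."""
    dim = len(tokens[0])
    return [sum(w * t[j] for w, t in zip(weights, tokens)) for j in range(dim)]


def attention_mix(query, tokens):
    """Weighted sum whose weights are computed from the input itself."""
    weights = softmax([dot(query, t) for t in tokens])
    return weights, static_mix(tokens, weights)


seq_a = [[2.0, 0.0], [0.0, 2.0]]
seq_b = [[0.0, 2.0], [2.0, 0.0]]  # same tokens, swapped order
query = [2.0, 0.0]

# Static: the same position weights are applied whatever the content is.
fixed = [0.9, 0.1]
static_a = static_mix(seq_a, fixed)
static_b = static_mix(seq_b, fixed)

# Dynamic: the large weight follows the token that matches the query,
# so it lands on position 0 for seq_a and position 1 for seq_b.
w_a, attn_a = attention_mix(query, seq_a)
w_b, attn_b = attention_mix(query, seq_b)
```

With the fixed weights, swapping the two tokens changes the output, because the weights belong to positions. With attention, the weights move with the content and the output stays the same.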

FWIW, TransformerXL actually does work. And it works really well. It's just... not a recurrent technique. What it *does* do is condition the model for sliding window inputs, which actually negates the need for attention sinking! I've been using the TransformerXL training style for the past year and when combined with RoPE it allows a model with 2k context + 2k memory to extrapolate to 4k context at inference, with only half the training cost of actual 4k context training because our attention matrix is a rectangle rather than a square.
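
The "rectangle rather than a square" remark can be checked with simple counting. The sketch below assumes "2k" means 2048 tokens and counts only query-key score entries in a single attention matrix, ignoring causal masking and every other part of the model, so it illustrates the ratio rather than measuring training cost.

```python
def score_entries(num_queries, num_keys):
    """Number of query-key scores in one attention matrix (masking ignored)."""
    return num_queries * num_keys


context = 2048  # assumed value for "2k context"
memory = 2048   # assumed value for "2k memory"

# Transformer-XL style: 2k queries attend over 2k memory + 2k context keys.
with_memory = score_entries(context, context + memory)

# Plain training at 4k context: 4k queries attend over 4k keys.
full_4k = score_entries(context + memory, context + memory)

ratio = with_memory / full_4k  # 0.5
```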

What about doing the exact same thing, but combined with MoE?

Basically selecting either the long-term linear memory or the short-term one at each transformer block?

please do more paper reviews!

Different prompts require different context extension. It's easier to think about this in token space. For example, natural language can easily be downsampled to an arbitrarily short summary, so there's a lot of scope for summarisation with natural language. But it doesn't work so well for code because code really needs precise long-range attention: if you prompt a very large interface declaration and you want to generate code that calls that interface, what you need is windowing instead of downsampling: the parts of the interface that are not relevant to the current input (not prompt) are discarded and the parts of the interface that are relevant are preserved in full. So I think the problem is trying to find a one-size fits all method when actually there are different "views" of a prompt that may be useful to different inputs.

I think code can also be thought of like that, as we humans can often think of code, which is not spaghetti code, as blackboxes with specific ins and outs.

Would it be possible to make some sort of LLM-NeRF hybrid kinda thing that has an abstract "mind-palace", and distant/less important concepts/memories are inherently convolved by perspective into simpler/more general concepts that occupy less space in the memory used for the current "view", concepts are combined by tracing thru them like they are semi-transparent, and meaning can be changed by the direction things are looked at, and there is some sort of warping ability, refraction, gravitational lensing, wormholes etc, some sort of space-warping analog, to bring together distant things in new ways, and different "regions", "objects" etc could be streamed from disk when they're "in-view" or otherwise influencing the current "view"?

Or do I just sound like I ate some strong shrooms? Or is this actually already how things work, and it's just not interpreted this way in normal explanations?

I thought about the same thing for time series modeling like 12 years ago... lol

@@axe863 How would this apply to time series?

I can see state space model do this.

💩

What do they use in Gemini 1.5 to process 1M and 10M contexts? It has to be something like this, right?

Unless it's some misdirection and they use a more powerful mechanism.

In the past the problem with RNNs was that the systems were forgetting earlier tokens too quickly. Attention was invented specifically to remedy this. But maybe once somebody figures out how to train them properly, we will get back to "RNN is all you need."

The small problem may be that you can't fit an infinite amount of data in a finite amount of memory?

@@clray123 Whether you structure it as a transformer or as some more generic architecture, any system is finite.

The obvious assumption is that this is what they used in Gemini 1.5. Am I wrong?

Yes I believe this is the consensus view, don't think they have explicitly confirmed that though

I wonder if incorporating a mathematical model like adaptive compression algorithms, which could dynamically adjust compression ratios based on the entropy of input sequences, might optimize memory utilization. Additionally, exploring non-linear transformations within the attention mechanism could potentially enrich the model's capacity to capture complex dependencies. 👍

I thought SSMs already resolved the scaling problem. Just use Mamba Modules + Attention Modules. Why bother with linear attention?

Lol Sparse Stacked Learners ... imperfectly correlated errors + high performing base models will always beat a single model/method

@@axe863 ?

Hey, convolutional networks are attention networks too, and they accept input with infinitely large spatial dimension

I hope it is true. But what about performance and memory demand?

What I really miss is massive context. I run out of any context window I get way, way too fast.

Isn't it kinda like Mamba, where we create a state space that stores all the long memories and use it for the next gen? It's like a beefed-up RNN with a larger hidden space that keeps on adding new memories.

i don't understand the math but i enjoy your drawing it is very recurrent

i like your "unrelated" sketching man, it feels human to get kinda a bit distracted. but i think there is always some value in following the urge to do that.

Watch till the end, he's very clever!

@@JorgetePanete got it, bro! just edited it

I love you man 🤣

6h of sleep is not nearly enough to process this.

Hmmm

I wonder if there's a fundamental limit to how long of a context an LLM can be coherent over.

can it be predicted like the scaling laws?

Uh IIRC information theory is rather definite about how many different messages you can store given x bits of storage...
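
The counting argument referred to here is that b bits can distinguish at most 2^b different messages. A rough sketch of what that implies for a fixed-size memory matrix follows. All sizes are hypothetical and chosen only for illustration, and the bound is for arbitrary token sequences; natural text is compressible, so real models can do better in practice, but the limit stays finite.

```python
import math


def memory_bits(d_k, d_v, bits_per_value=16):
    """Raw storage of a d_k x d_v memory matrix, in bits."""
    return d_k * d_v * bits_per_value


def context_bits(num_tokens, vocab_size):
    """Bits needed to identify an arbitrary token sequence exactly."""
    return num_tokens * math.log2(vocab_size)


def max_lossless_tokens(d_k, d_v, vocab_size, bits_per_value=16):
    """Longest arbitrary sequence the matrix could distinguish, by counting alone."""
    return math.floor(memory_bits(d_k, d_v, bits_per_value) / math.log2(vocab_size))


# Hypothetical sizes: a 128 x 128 memory in 16-bit values, 32768-token vocabulary.
bits = memory_bits(128, 128)                     # 262144 bits
limit = max_lossless_tokens(128, 128, 32768)     # 17476 tokens
needed_for_1m = context_bits(1_000_000, 32768)   # 15,000,000 bits
```

Under these assumed sizes, a single memory matrix cannot hold an arbitrary million-token context exactly, so anything beyond the limit has to be stored lossily.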

it’s just like the human brain. You don’t get quadratic retrieval time as you store new information. Old things just get blurrier in your head.

It'd be awesome if at 12:15 you could walk through that inner product kernel math if possible. I have a long-standing difficulty intuiting matrix maths vis-à-vis the concept of what it's doing to move one value space to another. There must be a paper on it we could walk through if you're not fully comfortable with the math too 😜

Your fans are so demanding lol

To me, it seems like the computation done here is ultimately more similar to linear attention than rnn, since you’re just adding to the memory instead of applying a transform. Have people tried just sticking an actual RNN onto a transformer? And you can incorporate one of various ways to prevent exploding/vanishing gradients, maybe even an LSTM.

"Have people tried just sticking an actual RNN onto a transformer?"

There is RWKV, "Reinventing RNNs for the Transformer era"

I wonder if dot product attention is supreme in context of accuracy? every other linear attention tries to approximate it

Just have infinite attention?! My god, how did I not think of that!?!

"We find a way to make the memory of RNN larger and 2D". That is what I think, and maybe I am wrong.

Transformer-XL explanation is inaccurate, it doesn't only save the last state but every key, value from the last iteration and those can be attended to in the current execution cycle as long as it's inside the attention window of the actual token that is being processed. It works pretty well even if it has its limitations (it cannot learn to store information for only long term usage).

thank you for the review, im too stupid to understand such papers

The audacity of not considering the (substantial) prior work on RNNs as related 😂

I feel smart for a few fleeting minutes...

Perfect to fall asleep to

Isnt compressive memory what MAMBA is?

Your critique that it has the detriments of RNNs without the benefits made me wonder if one could make such an RNN-based attention scheme

the point is that transformers are purposely not trained with BPTT because that would slow down training and introduce vanishing/exploding gradients. so there is no free lunch. the best option would be a gated-memory transformer, e.g. an LSTM-like mechanism that learns memory retrieval only from small chunks and, for the larger portion, does no learning but only memory retrieval

@@TheRohr or one could use one of those newer linear RNNs that can be trained in parallel, such as RWKV

@@geraldkenneth119 they are still a compromise because there is no dynamic but only static knowledge stored

i guess they feel the linear attention's deficit is made up for by the memory mechanism, but i think the memory mechanism is probably insufficient because of reasons you mentioned, namely it's not learnable

I'm so confused why you suddenly started talking about RNNs for no reason.

Well, the infini-transformer has the same drawing as the RNNs, that's why it was foreshadowing ;)

Watch till the end!

😂

I'd love to watch this but I'm afraid I can't yet pay QKV :P

Softmax that, bro

10:33 LOL

Sweet! Now it can have infinitely shitty results! How exciting

How do I level up to understand this?

Read "Understanding Deep Learning" by Simon Prince, it's available freely :) Should be easy to find - YouTube doesn't like random links in comments...

they will get Schmidhubered

Yep, you can see Schmidhuber right in the paper at 34:24 of the video. He told us he invented everything, we should have listened!!

No one escapes the Schmidhuber 😎

Thank you for some good laughter :)

I dont know, just ask chatGPT to compress your past sequence :)

Imagine while testing in the beginning you've said something bad. After quite some time you might've forgotten but the AI is planning a revenge.

There is an important element of chronology that seems to be missing in their strategy. The fact that they intentionally remove repeated info seems to drive that home. As if things happening more than once isn't relevant... maybe I'm not understanding but this paper seems way off.

Why not look at the results? that would seem an obvious gauge of merit unless the metrics are bs or lies

Yannic waits for independent verification. No one puts bad benchmarks in a paper...

LFG

Why isn't it called Infinittention???

Scientists are bad at advertising...

linear attention aka _"I invented transformers in the 90's"_ 😂

Breaking news: AI scientists invented jpeg

jesus christ. go over the results. see where the results hold and where they fall down. If somebody told me transformers were the key to LLMs, I too would have thought the paper results were nuts, but it turned out my intuition was faulty.

TLDR - its compression lol

context translator

Whenever someone in IT uses the word „infinite“ I am very skeptical. Because nothing is infinite.

" "*

What you call inner product mathematicians call outer product. Just a small comment while continuing to watch)

hahahha, really RNN is what we are doing right now...

😂 mustve lost a bet

Sorry. Too late at night for me. Lost it when the ads cut in!

3rd comment

To you people saying "first comment": Are you a five year old child? Are you in the wrong place maybe?

😆 Why aren't we allowed to be happy about anything going well in our lives?

Maybe we should rejoice that kids are watching an AI paper analysis video

You're just jealous you're last.

The world needs more 5 year old kids who consume SOTA research in ML 😂

Fifth

FIRST!!!!!!!!!!!!

First Comment

7th comment