LSTM is dead. Long Live Transformers!

- Published 1 May 2024

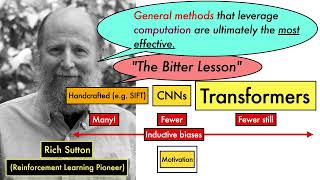

- Leo Dirac (@leopd) talks about how LSTM models for Natural Language Processing (NLP) have been practically replaced by transformer-based models. Basic background on NLP, and a brief history of supervised learning techniques on documents, from bag of words, through vanilla RNNs and LSTM. Then there's a technical deep dive into how Transformers work with multi-headed self-attention, and positional encoding. Includes sample code for applying these ideas to real-world projects.

- Science & Technology

Good to see Adam Driver working on transformers 😁

Thank you for this concise and well-rounded talk! The pseudocode example was awesome!

That's one of the best deep learning related presentations I've seen in a while! Not only introduced transformers but also gave an overview of other NLP strategies, activation functions and also best practices when using optimizers. Thank you!!

I second this! The talk was such a joy to listen to

in about 30 minutes!!!!

Agree -- I've watched half a dozen videos on transformers in the past 2 days, I wish I'd started with Leo's.

The best presentation/explanation to the topic I have seen so far. Thanks a lot :)

For anyone feeling overwhelmed, that is completely reasonable: this video is just a 28-minute recap for experienced machine learning practitioners, and a lot of them are spamming the top comments with "This is by far the best video", "Everything is clear with this single video" and the like.

Sounds like it is my lucky day then, for me to jump from noob to semi-non-noob by gathering thinking patterns from more-advanced individuals. I will fill in the swiss cheese holes of crystallized intelligence later by extrapolating out from my current fluid intelligence level... or something like that. Sorry I'll see myself out.

I was about to make a remark about the presenter speaking like a machine gun at the start. I can't even follow such a pace even in my native language, on a lazy Sunday afternoon with a drink in my hand. Who cares what you say if no one manages to understand it??? Easy, easy boy... slow down, no one cares how fast you can speak, what matters is what you are able to explain. (so the others understand it).

@@svily0 >I can't even follow such a pace even in my native language

maybe that's the issue?

@@user-zw5rp7xx4q Well, could as well be, but on the fringe side I have a masters degree. Could not be just that. ;)

This is by far the best comment, Everything is clear after reading this single comment! Thank you all

This finally made it clear for me why RNNs have been introduced! thanks for sharing

This is like 90% of what I remember from my NLP course with all the uncertainty cleared up, thanks!

Thanks for this! It gets to the heart of the matter quickly and in an easy to grasp way. Excellent.

The world deserves more lectures like this one. I don't need examples of how to tune U-Net, but rather an overview of this huge research space and the ideas underlying each group.

Leo is an excellent professor. He explains difficult concepts in an easy-to-understand way.

Great talk. It's always thrilling to see someone who actually knows what they're supposedly presenting.

I love this presentation

Doesn't assume that the audience knows far more than is necessary, goes through explanations of relevant parts of Transformers, notes shortcomings, etc;

Best slideshow I've seen this year, and it's from over 3 years ago

Excellent presentation! Perfect!

Thank you for sharing it. Really helpful!!

this is easily the best NLP talk ive heard this year

This is beautiful. Clear and concise!

Best transformer presentation I’ve seen hands down. Nice job!

Such a useful talk! TYSM 🤗

I was trying to use a similar super-low-frequency sine trick for audio sample classification (to give the network more clues about attack/sustain/release positioning). I never knew that one can use several of those in different phases. Such a simple and beautiful trick

The presentation is awesome

More vids please this was really informative on what actual SOTA is

This was fantastic. really well presented.

Excellent talk. Thank you @leopd !

This helped me a ton to understand the basics. Thanks!

Wonderful and educational, value to those who need it!

Thanks! Really good compare/contrasting.

very impressive presentation. thank you.

Great presentation!

Great Presentation!

All i want is his level of humbleness and knowledge

Don't just want it, make it happen then. You could literally do this

Find the humility to get your head down and acquire the knowledge. Let the universe do the rest.

It's hard to overstate just how much this topic has(is) transformed the industry. As others have said, understanding it is not easy because there are a bunch of components that don't seem to align with one another, and overall the architecture is such a departure from the more traditional things you are taught. I myself have wrangled with it for a while and it's still difficult to fully grasp. Like any hard problem, you have to bang your head against it for a while before it clicks.

"has(is)"??

One of the best talks on Deep Learning!...thank you

Amazing presentation

Excellent talk. Kudos!

This was amazing.

12:56 the review of the pseudocode of the attention mechanism was what finally helped me understand it (specifically the meaning of the Q,K,V vectors), what other videos were lacking. In the second outer for loop, I still don't fully understand why it loops over the length of the input sequence. The output can be of different length, no? Maybe this is an error. Also, I think he didn't mention the masking of the remaining output at each step so the model doesn't "cheat".

for every word we compute its query, key and value vectors, so we need to loop through our sequence
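
The two loops discussed above can be sketched as follows. This is a minimal single-head self-attention in plain Python, not the pseudocode from the talk: the helper names (`softmax`, `matvec`, `self_attention`), the identity weight matrices and the toy token vectors are all assumptions made for illustration. In encoder-style self-attention there is one output per input position, which is why the second loop runs over the input sequence; a decoder, where the output length can differ and future positions are masked, is not shown here.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def matvec(matrix, vec):
    """Multiply a (rows x cols) matrix by a length-cols vector."""
    return [sum(w * x for w, x in zip(row, vec)) for row in matrix]

def self_attention(xs, w_q, w_k, w_v):
    """Single-head self-attention over a list of token vectors xs."""
    # First loop: every input token gets its own query, key and value.
    queries = [matvec(w_q, x) for x in xs]
    keys = [matvec(w_k, x) for x in xs]
    values = [matvec(w_v, x) for x in xs]
    scale = math.sqrt(len(keys[0]))

    outputs = []
    # Second loop: one output per input position i.
    for q in queries:
        # Relevance of every position j to position i: scaled dot product.
        relevance = [sum(a * b for a, b in zip(q, k)) / scale for k in keys]
        weights = softmax(relevance)
        # Output i is the relevance-weighted average of all value vectors.
        out = [sum(w * v[d] for w, v in zip(weights, values))
               for d in range(len(values[0]))]
        outputs.append(out)
    return outputs

# Toy input: 3 tokens with 2-dimensional embeddings (made-up numbers).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]  # hypothetical weights, for illustration only
result = self_attention(tokens, identity, identity, identity)
```

With identity weights the query, key and value of a token are all equal to its embedding; in a trained model the three matrices are learned separately, which is what lets keys and values play different roles.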

What a great explanation, thank you.

interesting looks a lot like my signal class. how to implement various filters on a dsp.

Wow... that was a quick summarization of all the NN research things in past many decades...

Clear, precise, fluid thank you!

Great presentation.

Great summary! Wonder if you have a collection of talks you give on similar topics ?

Well done and thank you

Wonderfully clear and precise presentation. One thing that tripped me up, though, is this formula at 4 minutes in:

H_{i+1} = A(H_i, x_i)

Seems this should rather be:

H_{i+1} = A(H_i, x_{i+1})

which might be more intuitively written as:

H_i = A(H_{i-1}, x_i)

This is one of the clearest and most informative presentations about NLP models and their comparison. Thank you so much.

This talk is awesome!

This is hands down the best presentation on LSTMs and Transformers I have ever seen. The speaker is really good. He knows his stuff.

This was more than meets the eye

Amazing talk. It would be of great help if you can post link to the documents.

He is incredible

One of the best presenters

Clear and concise👍

RIP LSTM 2019, she/he/it/they would be remembered by....

Not everyone will get this

Still, LSTM works better with long texts. It has its own use cases.

@@dineshnagumothu5792 you obviously didn't get it. it is "DEAD", lol. RIP LSTM.

Simply Wow!

You folks need to look into asymptotics and Padé approximant methods, or, for functions of many variables (as ANNs are), the generalized Canterbury approximants. There is not yet a rigorous development in information-theoretic terms, but Padé summations (essentially repeated fraction representations) are known to yield rapid convergence to correct limits for divergent Taylor series in non-converging regions of the complex plane. What this boils down to is that you only need a fairly small number of iterations to get very accurate results if you only require approximations. To my knowledge this sort of method is not being used in deep learning, but has been used by physicists in perturbation theory. I think you will find it extremely powerful in deep learning. Padé (or Canterbury) summation methods when generalized are a way of extracting information from incomplete data. So if you use a neural net to get a few first approximants, and assume they are modelling an analytically continued function, then you have a series (the node activation summation) you can Padé sum and extract more information than you'd be able to otherwise.

Great video. Thanks

This is by far the best video. Ever.

where can I find the presentation doc of this talk amigos? thanks

this is awesome!!! thank you

Very good presentation

Great stuff.

This is outstanding!

Best Transformer explanation ever.

(Sorry for the lack of technical terms.) I did not completely get how transformers handle positional information: isn't X_in the information from the previous hidden layer? That is not enough for the network, because the input embeddings lack any temporal/positional information, right? But why not just add one new linear temporal value to the embeddings instead of many sine waves at different scales?
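
For readers with the same question, here is a minimal sketch of the sinusoidal positional encoding described in "Attention Is All You Need", in plain Python. The function names and the 8-dimensional example are assumptions for illustration. One commonly given argument against a single linear position value is that it grows without bound as sequences get longer, whereas every sine/cosine component stays in [-1, 1] and the different wavelengths together still identify the position.

```python
import math

def positional_encoding(position, d_model):
    """Sinusoidal encoding of one position as a d_model-dimensional vector."""
    encoding = []
    for i in range(0, d_model, 2):
        # Each pair of dimensions uses a different wavelength.
        angle = position / (10000 ** (i / d_model))
        encoding.append(math.sin(angle))
        encoding.append(math.cos(angle))
    return encoding[:d_model]

def add_position(embedding, position):
    """Add the positional encoding element-wise to a token embedding."""
    pe = positional_encoding(position, len(embedding))
    return [e + p for e, p in zip(embedding, pe)]

# Hypothetical example: 8-dimensional encodings for positions 0 and 50.
pe0 = positional_encoding(0, 8)
pe50 = positional_encoding(50, 8)
```

Because the encoding is simply added to the embedding, the attention layers see position and content mixed in the same vector.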

Amazing video, I feel like I actually have a more concrete grasp on how transformers work now. The only thing I didn't understand was the Positional Encoding but that's because I'm unfamiliar with signal processing.

Nice video but forced to watch on 2x speed trying not to fall asleep

Great presentation. Thank you!

This is brilliant.

Very nice recap of Transformers and what sets them apart from RNNs! Just one little remark, you are not doing things in N^2 for the transformer since you fixed your N to be at maximum some sequence length.

You can now set this N to be a much bigger number as GPUs have been highly optimized to do the corresponding multiplications. However, for long sequence lengths, the quadratic nature of an all-to-all comparison is going to be an issue nonetheless.

@10:30 - Attention is all you need -- Multi Head Attention Mechanism --

Very well explained... Love it.

A great introduction to transformers.

Does the multi-headed attention + position encoding work equally well and better than plain vanilla LSTM but on numeric input ( float or integers ) vectors / tensors ?

Your input is highly appreciated

Not an expert here, but the way attention works is closely tied to the way nearby words are relevant to each other: for example, a pronoun and its relevant noun. Multi-headed attention would identify more such abstract relationships between words in a window. So if the numeric input sequence has a set of consistent relationships among all its members, then attention would help embed more relational info on the input data, so that processing it becomes easier when honouring this relational info.

I've always wondered how standard ReLUs can provide non-trivial learning if they are essentially linear for positive values. I know that with standard linear activation functions any deep network can be reduced to a single-layer transformation. Is it the discontinuity in the slope at zero that stops this being the case for ReLU?

Exactly. Think of it like this. A matrix-vector multiplication is a linear transformation. That means it rotates and scales its input vector. That is why you can write two of these operations as a single one (A_matrix * B_matrix * C_vec = D_matrix * C_vec) and also why you can add scalar multiplications in between (which is what linear activation would do, and is just a scaling operation on the vector). But if you only scale some of the entries of the vector (ReLU), that does not work anymore.

If you take a pen, rotating and scaling it preserves your pen, but if you want to only scale parts of it, you have to break it.
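
The argument above can be checked numerically. The sketch below uses plain Python lists and made-up 2x2 matrices (`A`, `B` and the input vectors are assumptions chosen so the arithmetic is exact): two linear layers collapse into the single matrix D = A * B, while putting a ReLU between them produces a function that no single matrix can reproduce.

```python
def matmul(a, b):
    """Matrix product of two nested-list matrices."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def matvec(m, v):
    """Matrix-vector product."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]

def relu(v):
    """Element-wise ReLU: keep positive entries, zero out the rest."""
    return [max(0.0, x) for x in v]

A = [[1.0, -2.0], [0.5, 1.0]]
B = [[2.0, 1.0], [-1.0, 3.0]]
D = matmul(A, B)  # the single layer equivalent to applying B, then A

x = [1.0, -1.0]
two_linear = matvec(A, matvec(B, x))       # two stacked linear layers
collapsed = matvec(D, x)                   # one layer, same result
with_relu = matvec(A, relu(matvec(B, x)))  # ReLU in between changes the output

# Any linear map f satisfies f(x) + f(-x) = 0; the ReLU network does not.
neg_x = [-1.0, 1.0]
with_relu_neg = matvec(A, relu(matvec(B, neg_x)))
symmetry_sum = [p + q for p, q in zip(with_relu, with_relu_neg)]
```

The nonzero `symmetry_sum` is the "broken pen": the ReLU zeroes different entries for `x` and `-x`, so the overall map is no longer linear.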

@@lucast2212 Cheers! good explanation, thanks.

Presentation: perfect

Explanation: perfect

me (every 10 mins): " but that belt tho... ehh PERFECT!"

Amazing!!!

Four years ago! Shocking.

I have a question: is it possible to use those SOTA models shared at the very end of the presentation as document embeddings? Something analogous to doc2vec. My intent is to transform documents into vectors that represent them well and would allow me to compare the similarity of different documents.

Absolutely yes.

Thank you...

impressive!

Thanks so much for the video. Can I ask if anyone knows where I can find a pre-trained model to identify numbers from 0 to 100 in images? The numbers are not necessarily handwritten and can be at any position in the image.

Thanks in advance.

Great ! Thanks

Thankyou!

question regarding 26:27

so if i plan on analysing time series sensor data should i stick to LSTM or is the transformers model a good choice for time series data?

I could use an answer to this question as well

@@isaacgroen3692 Damn I have the same question

U need to use LSTM for time series.

Bcos in transformers, it's all about attention or positional intelligence which has to be learnt.

Whereas in time series, it's all about the trend and patterns which requires the model to remember a complete sequence of data points.

@@abdulazeez7971 thanks for the info :)

The primary advantages of the transformer are attention and positional encoding, which are quite useful for translation because grammatical differences between languages may reorder the words between input and output. But time series sensor data is not reordered (comparing output with input)! An RNN such as an LSTM is a suitable choice for analysing such data.

20:00 If I multiply a small scaling factor λ₁ (e.g. 0.01) to the output before feeding to activation function, sigmoid will be sensitive to difference between, say, 5 and 50. Similarly, if I multiply another scaling factor λ₂ (e.g. 100) to the sigmoid output, I can get activated output ranging between 0 and 100. Is that a better solution than Relu, which has no cap at all?

The problem with that approach is that in the very middle of the range the sigmoid is almost entirely linear - for input near zero, the output is 0.5 + x/4. And neural networks need nonlinearity in the activation to achieve their expressiveness. Linear algebra tells us that if you have a series of linear layers they can always and exactly be compressed down to a single linear layer, which we know isn't a very powerful neural net.
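
A quick numerical check of the linear approximation mentioned above, as a hedged sketch in plain Python. The scaling factor 0.01 and the raw inputs 5 and 50 come from the question; the function and variable names are assumptions for illustration.

```python
import math

def sigmoid(x):
    """Standard logistic sigmoid."""
    return 1.0 / (1.0 + math.exp(-x))

def linear_approx(x):
    """First-order Taylor expansion of the sigmoid around zero."""
    return 0.5 + x / 4.0

scale = 0.01  # the small scaling factor proposed in the question
errors = {}
for raw in (5.0, 50.0):
    scaled = scale * raw
    # Gap between the true sigmoid and the straight line at the scaled input.
    errors[raw] = abs(sigmoid(scaled) - linear_approx(scaled))
```

After scaling, both inputs land so close to zero that the sigmoid is nearly indistinguishable from a straight line there, which is the loss of nonlinearity the reply describes.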

@@LeoDirac Relu is linear from 0 to ∞

@@xruan6582 Right! That's the funny thing about ReLU - it either "does nothing" (leaves the input the same) or it "outputs nothing" (zero). But by sometimes doing one and sometimes doing the other, it is effectively making a logic decision for every neuron based on the input value, and that's enough computational power to build arbitrarily complex functions. If you want to follow the biological analogy, you can fairly accurately say that each neuron in a ReLU net is firing or not, depending on whether the weighted sum of its inputs exceeds some threshold (either zero, or the bias if your layer has bias). And then a cool thing about ReLU is that they can fire weakly or strongly.

Relevance is just how often a word appears in the input?

NM on this. I looked it up.

The answer is similarity of tokens in the embedding - ones with higher similarity get more relevance.

What do I do when I want to use transformers for time series analysis and the dataset includes individual-specific effects? In this case, would the only possibility be to match the batch size to the length of each individual's data?

No, batch and time will be different tensor dimensions. If your dataset has 17 features, and the length is 100 time steps, then your input tensor might be 32x100x17 with a batch size of 32.
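
To make the three dimensions concrete, here is a small sketch in plain Python using nested lists in place of a real tensor. The sizes 32, 100 and 17 are from the reply above; the `shape` helper and the zero-filled data are assumptions for illustration.

```python
batch_size, time_steps, num_features = 32, 100, 17

# One training batch: 32 independent sequences, each 100 time steps long,
# each time step described by 17 feature values (zeros as placeholders).
batch = [[[0.0] * num_features for _ in range(time_steps)]
         for _ in range(batch_size)]

def shape(nested):
    """Return the dimensions of a regularly nested list as a tuple."""
    dims = []
    while isinstance(nested, list):
        dims.append(len(nested))
        nested = nested[0]
    return tuple(dims)

batch_shape = shape(batch)
one_sequence_shape = shape(batch[0])
```

The batch size is therefore free to choose independently of how long each sequence is.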

Awesome!

great talk!

This aged like fine wine.

Do these transformers also work well for time series predictions? I am working on air pollution predictions and would like to try out these transformers in some Keras architecture for that application, if the architectures are available somewhere. Thanks

I haven't seen this tried in the literature. But transformers sure should work for time-series analysis - no reason they shouldn't to my eye. You might have to do the positional encoding yourself though - not sure if the Keras blocks do that for you.

@@LeoDirac Thanks for the hint! If you have used an architecture for time series forecasting yourself that is available on GitHub or somewhere else, I would appreciate knowing about it!

perfection

Was curious about machine learning and feel like I'm getting a lesson on how to speak in hieroglyphs.

Dude, can you share your PPT or PDF. Thanks in advance!

Excellent vid, am wondering about a point made around 22:00 about SGD being "slow but gives great results."

I was under the impression that SGD was generally considered pretty OK w/r/t speed, especially compared to full gradient descent? Maybe it's slow compared to Adam I guess, or in this specific use-case it's slow? Perhaps I'm wrong. Anyways, thanks for the vid!

I was really just comparing SGD vs Adam there. Adam is usually much faster than SGD to converge. SGD is the standard and so a lot of optimization research has tried to produce a faster optimizer.

Full batch gradient descent is almost never practical in deep learning settings. That would require a "minibatch size" equal to your entire dataset, which would require vast amounts of GPU RAM unless your dataset is tiny. FWIW, full batch techniques can actually converge fairly quickly, but it's mostly studied for convex optimization problems, which neural networks are not. The "noise" introduced by the random samples in SGD is thought to be very important to help deal with the non-convexity of the NN loss surface.
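
The difference between minibatch SGD and full-batch gradient descent can be sketched on a toy problem. This is an illustrative plain-Python example, not something from the talk: the one-parameter model y = w * x, the data, the learning rate and the function name `minibatch_sgd` are all assumptions. Setting the batch size equal to the dataset size turns the same loop into full-batch gradient descent.

```python
import random

def minibatch_sgd(xs, ys, batch_size, steps, learning_rate, seed=0):
    """Fit y = w * x by gradient steps on mean squared error; returns w."""
    rng = random.Random(seed)
    indices = list(range(len(xs)))
    w = 0.0
    for _ in range(steps):
        # Draw a random minibatch; this sampling is the source of SGD "noise".
        batch = rng.sample(indices, batch_size)
        # Gradient of mean((w*x - y)^2) over the sampled examples only.
        grad = sum(2.0 * (w * xs[i] - ys[i]) * xs[i] for i in batch) / batch_size
        w -= learning_rate * grad
    return w

# Toy dataset generated from the true slope w = 3 (made-up numbers).
xs = [0.1 * i for i in range(1, 11)]
ys = [3.0 * x for x in xs]

w_minibatch = minibatch_sgd(xs, ys, batch_size=2, steps=500, learning_rate=0.1)
w_full_batch = minibatch_sgd(xs, ys, batch_size=len(xs), steps=500, learning_rate=0.1)
```

On a convex toy problem like this both variants reach the same answer; the memory cost of the full-batch version only becomes a problem at deep learning dataset sizes, as described above.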

Uploaded a month ago but has just 150 views and just 24 subs? WTH?

@@vothka205 But ML uses cats and dogs too!

1. @10:17, the speaker says all we need is the encoder part for classification problem, is this True? How about BERT, when we use BERT encoding for classification, say sentiment analysis, all that has worked was the encoder part?

2. @ 12:25, the slide is really clear in explaining relevance[i,j], but the example is translation, so clearly it is not on the "encoder part". In the encoder part, how is relevance[i,j] computed? what is the difference between key and value? It seems they are all values of the input vector? Aren't they the same in the encoder part?

Thank you!

Good question...Key and Value seems symmetric. I was expecting symmetry in a self-attention model, but I can't quite understand how this works with the key/value analogy.

how do transformers deal with too large inputs? for example if you want to process an entire book? is there still some memory or autoregressive setting with transformers?

Short answer is you can't. Long answer is people are working on it - don't have any research papers handy, and I don't think there's consensus. But fundamentally this remains one of the key limitations of transformers - they don't work on very large documents. In practice, the size of documents they do work on is big enough for most any problem. I mean if you really want to (a.k.a. have a big team of smart engineers), nothing's stopping you from building a giant cluster implementation where each node is handling its own set of tokens, but dear god it would be slow. Bandwidth inside a GPU is ~terabyte / second, while most datacenter networks can't do more than a couple gigabytes/second.

@@LeoDirac thanks for the (short and long) answers. I have seen recently publications aiming at reducing the quadratic complexity like Transformers are RNNs (arxiv.org/abs/2006.16236). I guess transformer really solve how to route information accurately, but not how to compress memory, nor how to use past tokens out of reach for gradient-based optimization (perhaps this is badly formulated).

good video!

6:41 hahaha this is GODLIKE! The fact that Schmidhuber is on there makes the joke even better!

I need that belt.

at 5:40, I think "determinant" is the right word instead of "eigenvalue"...