DeepMind x UCL RL Lecture Series - Model-free Control [6/13]


- Published 20 May 2024

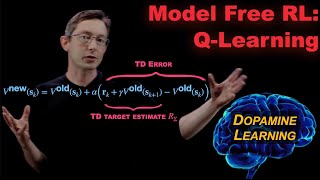

- Research Scientist Hado van Hasselt covers prediction algorithms for policy improvement, leading to algorithms that can learn good behaviour policies from sampled experience.

Slides: dpmd.ai/modelfreecontrol

Full video lecture series: dpmd.ai/DeepMindxUCL21 - Science & Technology
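Learning a good behaviour policy from sampled experience, as the description puts it, usually combines two standard model-free control pieces: epsilon-greedy policy improvement and an on-policy TD update such as SARSA. The sketch below is a minimal tabular version under stated assumptions: the Q-table is a hypothetical dict mapping each state to a list of action values, and the function names are illustrative, not taken from the lecture slides.

```python
import random


def epsilon_greedy_probs(q_values, epsilon):
    """Action probabilities of an epsilon-greedy policy over a list of action values."""
    n = len(q_values)
    greedy = max(range(n), key=lambda a: q_values[a])  # ties -> lowest index
    probs = [epsilon / n] * n       # every action gets epsilon / |A|
    probs[greedy] += 1.0 - epsilon  # the greedy action gets the remaining mass
    return probs


def epsilon_greedy_action(q_values, epsilon, rng=random):
    """Sample an action index from the epsilon-greedy policy."""
    probs = epsilon_greedy_probs(q_values, epsilon)
    return rng.choices(range(len(q_values)), weights=probs, k=1)[0]


def sarsa_update(q, s, a, r, s_next, a_next, alpha, gamma, terminal=False):
    """One on-policy SARSA step: move q[s][a] towards r + gamma * q[s_next][a_next]."""
    target = r if terminal else r + gamma * q[s_next][a_next]
    q[s][a] += alpha * (target - q[s][a])


# Usage on a hypothetical two-state Q-table (dict of per-action value lists).
q = {0: [0.0, 0.0], 1: [1.0, 0.0]}
rng = random.Random(0)
a_next = epsilon_greedy_action(q[1], epsilon=0.1, rng=rng)
sarsa_update(q, s=0, a=0, r=1.0, s_next=1, a_next=a_next, alpha=0.5, gamma=0.9)
print(q[0])
```

Because the next action is sampled from the same epsilon-greedy policy that is being improved, this is on-policy control; replacing `q[s_next][a_next]` with `max(q[s_next])` would turn it into Q-learning.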


I really appreciate Hado's teaching style in the past two videos. There are some things I struggled to understand about the core concepts and mathematical formulations of reinforcement learning, and some of these confusing concepts were introduced in the first lectures.

I realized that without working through a lot of examples myself, or really thinking through all the implications, I would never have a strong intuition for the material, and that would be an impediment to learning further material.

But Hado's consistent review, and his focus on explaining important nuances that aren't self-evident from just looking at the formulations, really helped me build a robust, clear, useful intuition for these concepts: the kind of intuition you need to play around with them in novel ways.

What an amazing lecture from the inventor of Double Q-learning!

Can I take this course bro?

Planning to start this. I would like to learn RL, but I'm afraid I might pick the wrong course.

Can you suggest some good courses on RL?

59:50 Classical Q-learning: 99% of gamblers quit right before they win big
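The joke points at Q-learning's maximization bias: taking a max over noisy value estimates is biased upward, so Q-learning keeps "expecting the big win". A minimal sketch, assuming a hypothetical setup where every action's true value is 0 and each estimate is that value plus Gaussian noise, compares the single max estimator with a double estimator that selects the action with one set of estimates and evaluates it with an independent set (the idea behind Double Q-learning).

```python
import random


def single_max_estimate(true_values, noise_std, trials, seed=0):
    """Average of max_a Qhat(a), where each Qhat(a) = true value + Gaussian noise."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        estimates = [v + rng.gauss(0.0, noise_std) for v in true_values]
        total += max(estimates)  # same noisy numbers pick AND score the action
    return total / trials


def double_estimate(true_values, noise_std, trials, seed=0):
    """Select the argmax with one noisy estimate, evaluate it with an independent one."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        select = [v + rng.gauss(0.0, noise_std) for v in true_values]
        evaluate = [v + rng.gauss(0.0, noise_std) for v in true_values]
        best = max(range(len(true_values)), key=lambda a: select[a])
        total += evaluate[best]  # scoring noise is independent of the selection
    return total / trials


true_values = [0.0] * 10  # every action is actually worth 0
print(single_max_estimate(true_values, noise_std=1.0, trials=10_000))  # well above 0
print(double_estimate(true_values, noise_std=1.0, trials=10_000))      # close to 0
```

With 10 equally valued actions the single estimator lands around 1.5 even though the true maximum is 0, while the double estimator stays near 0.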

I wish these lectures had a practical part with some coding in Python 😀

Thanks for such a wonderful lecture! 6/13

I'm here now!

Does anyone know whether there are different versions of the Double DQN algorithm? As I see it, there are a couple of ways to implement the idea of breaking the overestimation. For example, to update q you could use either of the following update rules:

$r + \gamma\, q'(s', \arg\max_{a'} q(s', a'))$, as in the video: select the action with q, evaluate it with q'

$r + \gamma\, q(s', \arg\max_{a'} q'(s', a'))$: select the action according to q', but evaluate it with q
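For reference, the original tabular Double Q-learning keeps two tables and, on each step, randomly picks which one to update; the table being updated selects the next action and the other table evaluates it (the first rule above, with q as the table being updated). In Double DQN the target network plays the role of q'. The sketch below is a minimal tabular version, assuming hypothetical dict-of-lists Q-tables and an illustrative function name; it does not settle whether the second rule is used in practice.

```python
import random


def double_q_update(q_a, q_b, s, a, r, s_next, alpha, gamma, terminal=False, rng=random):
    """One tabular Double Q-learning step on two Q-tables (dicts of per-action lists).

    With probability 0.5 q_a is updated (select with q_a, evaluate with q_b);
    otherwise the roles are swapped. Returns True if q_a was the table updated.
    """
    if rng.random() < 0.5:
        update, other = q_a, q_b
    else:
        update, other = q_b, q_a
    if terminal:
        target = r
    else:
        # Select the next action with the table being updated...
        best = max(range(len(update[s_next])), key=lambda b: update[s_next][b])
        # ...but evaluate it with the other table.
        target = r + gamma * other[s_next][best]
    update[s][a] += alpha * (target - update[s][a])
    return update is q_a


# Usage: plain Q-learning on q_a alone would bootstrap from max(q_a[1]) = 5.0.
q_a = {0: [0.0, 0.0], 1: [5.0, 1.0]}
q_b = {0: [0.0, 0.0], 1: [2.0, 10.0]}
updated_a = double_q_update(q_a, q_b, s=0, a=0, r=1.0, s_next=1, alpha=0.5, gamma=0.5)
print(updated_a, q_a[0], q_b[0])
```

Acting is typically done with respect to the sum or average of both tables (for example epsilon-greedy on `q_a[s][i] + q_b[s][i]`).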

The cancellation at 1:25:00 seems strange to me. If our policies $\mu$ and $\pi$ choose different actions (because they're different policies), wouldn't we end up with different $A_t$'s and then be unable to cancel them?

Edit: if anyone else is confused by this: we're literally computing the probability of the same trajectory $\tau$ under $\mu$ and under $\pi$. $A_t$ is just the action that was actually taken, and we're computing the probability of it occurring under each policy, so all the cancellation goes through.
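Written out, assuming the usual importance-sampling setup (notation ours, not necessarily the slide's), for a trajectory $\tau = S_t, A_t, R_{t+1}, S_{t+1}, \dots, S_T$ generated by $\mu$, both numerator and denominator score the same actions $A_k$; only the policy factor differs, so the environment's dynamics cancel:

$$
\frac{p(\tau \mid \pi)}{p(\tau \mid \mu)}
= \frac{\prod_{k=t}^{T-1} \pi(A_k \mid S_k)\, p(R_{k+1}, S_{k+1} \mid S_k, A_k)}
       {\prod_{k=t}^{T-1} \mu(A_k \mid S_k)\, p(R_{k+1}, S_{k+1} \mid S_k, A_k)}
= \prod_{k=t}^{T-1} \frac{\pi(A_k \mid S_k)}{\mu(A_k \mid S_k)}
$$

The denominator is nonzero because every $A_k$ was sampled from $\mu$, so $\mu(A_k \mid S_k) > 0$.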

Can someone please explain again the requirement on alpha at 39:38?

And why is 1/t okay, i.e., why does it satisfy that requirement?
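Assuming the requirement at 39:38 is the standard stochastic-approximation (Robbins-Monro) condition on step sizes, $\sum_t \alpha_t = \infty$ and $\sum_t \alpha_t^2 < \infty$, then $\alpha_t = 1/t$ satisfies it: the harmonic series diverges (growing like $\ln t$), while $\sum_t 1/t^2 = \pi^2/6$ is finite. A side effect is that $\alpha_t = 1/t$ turns the incremental update into an exact running sample mean. The sketch below checks both numerically; the function names are hypothetical.

```python
import math


def partial_sums(n):
    """Return (sum of 1/t, sum of 1/t^2) for t = 1..n."""
    s1 = sum(1.0 / t for t in range(1, n + 1))
    s2 = sum(1.0 / t**2 for t in range(1, n + 1))
    return s1, s2


for n in (10, 1_000, 100_000):
    s1, s2 = partial_sums(n)
    # s1 keeps growing without bound; s2 approaches pi^2 / 6
    print(f"n={n:>7}: sum 1/t = {s1:.3f}, sum 1/t^2 = {s2:.4f}, pi^2/6 = {math.pi**2 / 6:.4f}")


def incremental_mean(samples):
    """q <- q + alpha_t * (x_t - q) with alpha_t = 1/t."""
    q = 0.0
    for t, x in enumerate(samples, start=1):
        q += (1.0 / t) * (x - q)
    return q


data = [2.0, 4.0, 9.0, 1.0]
print(incremental_mean(data), sum(data) / len(data))  # both approximately 4.0
```

1/t is one valid choice, not the only one: any $\alpha_t = 1/t^p$ with $1/2 < p \le 1$ also meets both conditions, whereas a constant step size violates the second.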

This one is serious stuff.

What does the I notation mean? I(), like at 16:16?

It's the indicator function:

I(TRUE) = 1

I(FALSE) = 0
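In code, the indicator is just a boolean cast to 0 or 1. A common use in this setting, though we are assuming this is what appears at 16:16, is writing the greedy policy as $\pi(a \mid s) = \mathcal{I}(a = \arg\max_b q(s, b))$. A minimal sketch with hypothetical function names:

```python
def indicator(condition):
    """I(condition): 1 if the condition is true, 0 otherwise."""
    return 1 if condition else 0


def greedy_policy_probs(q_values):
    """pi(a|s) = I(a == argmax_b q(s, b)) over a list of action values (ties -> first)."""
    best = max(range(len(q_values)), key=lambda b: q_values[b])
    return [indicator(a == best) for a in range(len(q_values))]


print(greedy_policy_probs([0.5, 2.0, 1.0]))  # [0, 1, 0]
```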