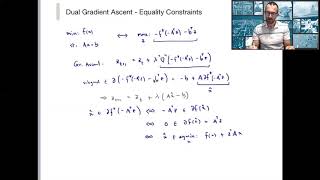

Dual Ascent, Dual Decomposition, and Method of Multipliers

- Published 28 Sep 2024

- A very short introduction to dual ascent, dual decomposition, and the method of multipliers for optimization. I followed Chapter 2 of Distributed Optimization and Statistical Learning via the Alternating Direction Method of Multipliers by Boyd et al. I planned the screencast as three segments but eventually pasted them together, so whenever I say something "was mentioned last time," it was actually mentioned earlier in this video.
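For readers who want something to run, here is a minimal sketch of the dual ascent iteration for an equality-constrained problem, minimize f(x) subject to Ax = b. It is not taken from the video: the quadratic objective, the toy data `P`, `q`, `A`, `b`, and the constant step size are all assumptions chosen for illustration. With a strongly convex quadratic, a constant step of one over the largest eigenvalue of A P⁻¹ Aᵀ is one safe choice among several.

```python
import numpy as np

def dual_ascent(P, q, A, b, alpha, iters=500):
    """Dual ascent for: minimize 0.5*x'Px + q'x  subject to Ax = b.

    Assumes P is symmetric positive definite, so the x-update has a
    closed form. alpha is a constant step size (an assumption here).
    """
    y = np.zeros(A.shape[0])  # dual variable (Lagrange multiplier)
    for _ in range(iters):
        # x-update: minimize the Lagrangian L(x, y) over x
        x = np.linalg.solve(P, -(q + A.T @ y))
        # y-update: gradient ascent on the dual, using the residual Ax - b
        y = y + alpha * (A @ x - b)
    return x, y

# Hypothetical toy problem: minimize x1^2 + 2*x2^2 - x1 - x2  s.t. x1 + x2 = 1
P = np.array([[2.0, 0.0], [0.0, 4.0]])
q = np.array([-1.0, -1.0])
A = np.array([[1.0, 1.0]])
b = np.array([1.0])

# Constant step: 1 / (spectral norm of A P^{-1} A^T)
alpha = 1.0 / np.linalg.norm(A @ np.linalg.solve(P, A.T), 2)

x, y = dual_ascent(P, q, A, b, alpha)
print("x =", x, "y =", y, "residual =", A @ x - b)
```

In this sketch the residual Ax - b is the gradient of the dual function at the current y, which is why the y-update is a plain gradient step.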

Great video. Really appreciate it. But I do have a question. How is alpha^k chosen at each iteration? Or are there various ways to determine the step size?

Thank you for this video, I have learned a lot.

Why do we need to find a y that maximizes the inner term? I.e., why should we maximize the Lagrange multiplier term?

Do you mean the beginning? This is a trick to absorb the constraint. When we rewrite the original constrained optimization problem as a min-max problem, the inner maximization over y makes the objective infinite whenever the constraint is violated, so the outer minimization can never choose such a point.
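To spell the trick out, assuming the equality-constrained form used in Chapter 2 of Boyd et al. (minimize f(x) subject to Ax = b; the symbols f, A, b are taken from that setting rather than from this comment):

```latex
\sup_{y}\; y^{T}(Ax - b) =
\begin{cases}
0, & Ax = b,\\
+\infty, & Ax \neq b,
\end{cases}
\qquad\text{so}\qquad
\min_{x}\,\sup_{y}\,\bigl[f(x) + y^{T}(Ax - b)\bigr]
\;=\;
\min_{x:\,Ax = b} f(x).
```

If any component of Ax - b is nonzero, y can be scaled along that direction to push the inner value to infinity; when Ax = b the multiplier term vanishes and only f(x) remains.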

@ousam2010 Yes, makes sense. I did a bit of reading about the dual problem. Thank you.

Do you have lecture slides of this video?

dope vid