SINDy-RL: Interpretable and Efficient Model-Based Reinforcement Learning


- Published 28 Jun 2024


by Nicholas Zolman, Urban Fasel, J. Nathan Kutz, Steven L. Brunton

arxiv paper: arxiv.org/abs/2403.09110

github code: github.com/nzolman/sindy-rl

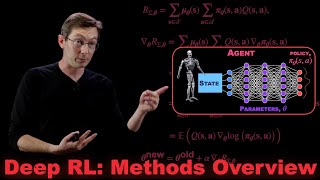

Deep reinforcement learning (DRL) has shown significant promise for uncovering sophisticated control policies that interact in environments with complicated dynamics, such as stabilizing the magnetohydrodynamics of a tokamak fusion reactor or minimizing the drag force exerted on an object in a fluid flow. However, these algorithms require an abundance of training examples and may become prohibitively expensive for many applications. In addition, the reliance on deep neural networks often results in an uninterpretable, black-box policy that may be too computationally expensive to use with certain embedded systems. Recent advances in sparse dictionary learning, such as the sparse identification of nonlinear dynamics (SINDy), have shown promise for creating efficient and interpretable data-driven models in the low-data regime. In this work we introduce SINDy-RL, a unifying framework for combining SINDy and DRL to create efficient, interpretable, and trustworthy representations of the dynamics model, reward function, and control policy. We demonstrate the effectiveness of our approaches on benchmark control environments and challenging fluids problems. SINDy-RL achieves comparable performance to state-of-the-art DRL algorithms using significantly fewer interactions in the environment and results in an interpretable control policy orders of magnitude smaller than a deep neural network policy.
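As a rough illustration of the sparse dictionary learning idea mentioned above, the sketch below fits a one-step dynamics model x_next = Theta(x, u) @ coef with sequentially thresholded least squares, the regression commonly associated with SINDy. It is a minimal sketch, not the authors' implementation (their code is at github.com/nzolman/sindy-rl). The toy dynamics, the polynomial library, the threshold value, and the names `library` and `stlsq` are all assumptions made for this example.

```python
import numpy as np

# Hypothetical candidate library: polynomials up to degree 2 in state x and control u.
NAMES = ["1", "x", "u", "x^2", "x*u", "u^2"]

def library(x, u):
    """Build the dictionary matrix Theta(x, u), one column per candidate term."""
    return np.column_stack([np.ones_like(x), x, u, x**2, x * u, u**2])

def stlsq(theta, target, threshold=0.05, n_iter=10):
    """Sequentially thresholded least squares: fit, zero small terms, refit the rest."""
    coef = np.linalg.lstsq(theta, target, rcond=None)[0]
    for _ in range(n_iter):
        small = np.abs(coef) < threshold
        coef[small] = 0.0
        big = ~small
        if not big.any():
            break
        coef[big] = np.linalg.lstsq(theta[:, big], target, rcond=None)[0]
    return coef

# Toy one-step data (state, control, next state). These dynamics are invented
# for illustration and do not come from the paper.
rng = np.random.default_rng(0)
x = rng.uniform(-1, 1, 200)
u = rng.uniform(-1, 1, 200)
x_next = 0.9 * x - 0.2 * x**2 + 0.5 * u + 0.001 * rng.standard_normal(200)

coef = stlsq(library(x, u), x_next)

# The fitted model is a short symbolic expression rather than a neural network.
terms = [f"{c:+.2f}*{n}" for c, n in zip(coef, NAMES) if c != 0.0]
print("x_next =", " ".join(terms))
```

The fitted model keeps only a handful of named terms, which is what makes this kind of surrogate easy to read and cheap to evaluate compared with a neural network.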

%%% CHAPTERS %%%

00:00 Intro

01:25 What is Reinforcement Learning?

03:12 Reinforcement Learning Drawbacks

05:20 Dictionary Learning and SINDy

06:55 SINDy-RL: Environment

11:42 SINDy-RL: Reward

13:25 SINDy-RL: Agent

14:48 SINDy-RL: Uncertainty Quantification

20:07 Recap and Outro

- Science and Technology

You guys are so brilliant. Such a great idea; I would love to hear a podcast with you guys talking about how you came up with these ideas and the life cycle of SINDy-RL.

Impressive! Thank you very much for sharing and for the inspiration.

Bold steps ... thrilling work! I look forward to working through the implementation.

Great work. This is fantastic!

Amazing. I've been looking for something like this.

Great presentation, very interesting approach. I’m curious about the intuition behind the ensemble…eager to read more. Thanks!

Thanks Jim! The ensembling gives us way more robustness to noisy data and also to very few data samples, so it can let us train models much more quickly than NN models.
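To give some intuition for the ensembling mentioned in the reply above, here is a minimal sketch of one common way to build such an ensemble: fit many sparse models on bootstrap resamples of a small, noisy dataset and aggregate the coefficients with a median. Whether this matches the authors' exact procedure is an assumption. The toy dynamics, sample size, noise level, and the names `stlsq` and `ensemble_fit` are hypothetical and chosen only for illustration.

```python
import numpy as np

def stlsq(theta, target, threshold=0.05, n_iter=10):
    """Sequentially thresholded least squares: fit, zero small terms, refit the rest."""
    coef = np.linalg.lstsq(theta, target, rcond=None)[0]
    for _ in range(n_iter):
        small = np.abs(coef) < threshold
        coef[small] = 0.0
        big = ~small
        if not big.any():
            break
        coef[big] = np.linalg.lstsq(theta[:, big], target, rcond=None)[0]
    return coef

def ensemble_fit(theta, target, n_models=50, seed=0):
    """Fit one sparse model per bootstrap resample; return median and spread."""
    rng = np.random.default_rng(seed)
    n = len(target)
    coefs = []
    for _ in range(n_models):
        idx = rng.integers(0, n, size=n)  # sample rows with replacement
        coefs.append(stlsq(theta[idx], target[idx]))
    coefs = np.array(coefs)
    return np.median(coefs, axis=0), coefs.std(axis=0)

# Small, noisy toy dataset (invented dynamics, not from the paper).
rng = np.random.default_rng(1)
x = rng.uniform(-1, 1, 40)
u = rng.uniform(-1, 1, 40)
theta = np.column_stack([np.ones_like(x), x, u, x**2, x * u, u**2])
x_next = 0.9 * x - 0.2 * x**2 + 0.5 * u + 0.02 * rng.standard_normal(40)

median_coef, spread = ensemble_fit(theta, x_next)
print("median coefficients:", np.round(median_coef, 3))
print("spread across models:", np.round(spread, 3))
```

The median discards terms that only show up in a minority of resamples, and the spread across ensemble members gives a rough indication of how uncertain each coefficient is.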

Absolutely brilliant

Has your lab considered experimenting with Kolmogorov-Arnold Networks in combination with SINDy? It feels like a potentially excellent match.

Their approach to network sparsification, in particular, seems like it could be automated in a very interesting way via SINDy. In the recent paper they fix and prune activation functions by hand, but it seems that you could instead use SINDy to automatically fix a particular activation function once it fit a dictionary term beyond some threshold.

Love the presentation!

Neat idea -- definitely thinking about ways of connecting these topics. Thanks!

Thanks for the video!

Thanks for the presentation. Do I understand correctly that this whole process could be automated, making highly efficient agents, or do some aspects of this process require manual work? Also, how well does it scale to significantly harder RL problems? Does this technique stay computationally efficient (e.g. compared to PPO) in these harder environments? Could this be combined with reinforcement learning from human feedback (RLHF) in a practical manner?

Great video!

Nick: Excellent work! This is genuine progress in AI to integrate state estimation SOTA with decision making (RL). Would love to see this further refined using POMDPs (Partially Observable Markov Decision Processes).

Check out the PlaNet and Dreamer models.

Curious about how the model fitting can accelerate the training process. Are there any assumptions on the action space, state space, or environment? Thanks for your attention.

Great!

Amazing

Interesting

This is so amazing I don't have words. DeepMind made computers play Go and chess using reinforcement learning. It is simply superb.

Dear Sir,

If we want to use reinforcement learning (RL) in a specific environment, I am concerned that the trial-and-error method will result in many errors, some of which may have negative consequences. Furthermore, I am unsure how many attempts the RL model will need to reach the optimal and correct decision. How can this challenge be addressed?

Real AI is RL