VQ-VAE | Everything you need to know about it | Explanation and Implementation


- Published 30 May 2024

- In this video I go over the Vector Quantised Variational Auto Encoder (VQVAE).

Specifically, I talk about how it's different from a VAE, its theory, and its implementation.

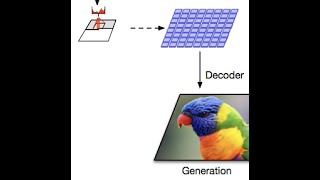

I also train a VQVAE model and show what the generation process looks like once the model is trained.

Timestamps

--------------------

00:00 Intro

00:27 Need and difference from VAE

02:43 VQVAE Components

04:31 Argmin and Straight Through Gradient Estimation

07:11 Codebook and Commitment Loss

08:45 KL Divergence Loss

09:30 Implementation

12:35 Visualization

14:29 Generating Images

16:37 Outro

Paper Link - tinyurl.com/exai-vqvae-paper

Subscribe - tinyurl.com/exai-channel-link

Inspiration of visualization of embedding taken from - • [VQ-VAE] Neural Discre...

Github Repo Link - tinyurl.com/exai-vqvae-repo (Will be updated soon)

Background Track Fruits of Life by Jimena Contreras

Email - explainingai.official@gmail.com

Github Code - github.com/explainingai-code/VQVAE-Pytorch

Thank you, the visuals really helped me understand, especially for the backpropagation part !

Happy that the video was some help to you :)

Great explanation, covering theory and implementation. Nice visualisations. Thanks!

Thank You!

Brilliant video, thanks so much!

You're very welcome!

Very clear explanation! Thanks for the implementation + the visualisation of the codebook!

Thank you

Dude your videos are absolutely amazing! Thank you!!!

Thanks a lot 🙂

Thanks for the explanation, this is very helpful!!!

Thank You! I'm glad that it ended up helping in any way.

Amazing explanation with easy to follow animations.

Thank you

Thank you for providing insightful perspectives on this topic.

I appreciate your unique perspective and the effort you've put into providing valuable information, rather than simply copying from the paper. Keep up the great work!

Thank you for saying that!

How did you code the visualization?

Thank you for the tutorial. This is by far the best on YouTube. Please keep it up.

Thank you!

The visualization is not something I came up with myself. I saw it in a different video (link in the description) and thought it would be much better to explain with that kind of visualization.

This is roughly how I implemented it.

-> Reduce the latent dimension to 2 and the codebook size to 3

-> Bound the VQVAE encoder outputs to a range, say -1 to 1 or 0 to 1, using some activation at the final layer of the encoder.

-> Map both dimensions to a color intensity value. So maybe the x axis is the green component (0-1 mapped to 0-255), the y axis is the red component, and blue is always 255. Then color each point as (R, G, B) -> (encoded_dimension_1_value*255, encoded_dimension_2_value*255, 255)

-> Train the VQVAE, then get the codebook vectors of the trained model and the encoder outputs for an image.

-> The points on the plot are the encoder outputs for each cell of the encoder output feature map, and e1, e2, e3 are the codebook vectors.

-> Generate the quantized image using this mapping.

I hope this gives some clarity on the implementation part.
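
The steps above could be sketched roughly like this. It is only a minimal illustration, not the code used for the video: the random `encoder_out` and `codebook` tensors are hypothetical stand-ins for a trained model's outputs, the 4x4 feature map size is an assumption, and the helper `latent_to_rgb` is a made-up name that follows the (R, G, B) formula as written above.

```python
import torch

torch.manual_seed(0)

# Hypothetical stand-ins: in the real setup these come from a trained VQVAE.
encoder_out = torch.rand(4, 4, 2)  # H x W feature map of 2-dim latents in [0, 1]
codebook = torch.rand(3, 2)        # 3 codebook vectors (e1, e2, e3), each 2-dim


def latent_to_rgb(z):
    """Map 2-dim latents in [0, 1] to colors (dim1*255, dim2*255, 255)."""
    blue = torch.full_like(z[..., :1], 255.0)
    return torch.cat([z * 255.0, blue], dim=-1).round().to(torch.uint8)


# Find the nearest codebook vector for every cell of the feature map.
flat = encoder_out.reshape(-1, 2)                                  # (H*W, 2)
dists = ((flat[:, None, :] - codebook[None, :, :]) ** 2).sum(-1)   # (H*W, 3)
indices = dists.argmin(dim=-1)                                     # (H*W,)
quantized = codebook[indices].reshape(4, 4, 2)

point_colors = latent_to_rgb(encoder_out)    # colors of the scattered points
codebook_colors = latent_to_rgb(codebook)    # colors of e1, e2, e3
quantized_image = latent_to_rgb(quantized)   # (4, 4, 3) quantized image
```

Because every cell is replaced by one of the three codebook vectors, the quantized image can contain at most three distinct colors, which is what makes the plot easy to read.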

Really nice. Thanks for posting. At 9:52, why are you using nn.Embedding instead of nn.Parameter(torch.randn((3,2)))? I don't understand where the Embedding comes from.

Thank you! Actually, they are effectively the same. nn.Embedding just uses nn.Parameter with normal initialization anyway.

github.com/pytorch/pytorch/blob/d947b9d50011ebd75db2e90d86644a19c4fe6234/torch/nn/modules/sparse.py#L143

So nn.Embedding is just a wrapper on top of nn.Parameter, in the form of a lookup table that stores embeddings for a dictionary of fixed size. Hope it helps.
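
A small check along these lines may make the equivalence concrete. It is a sketch under the shapes mentioned in the question (3 vectors of dimension 2); the variable names are illustrative and not taken from the repo.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

embedding = nn.Embedding(3, 2)               # 3 codebook vectors of dimension 2
parameter = nn.Parameter(torch.randn(3, 2))  # same shape, also drawn from N(0, 1)

# The embedding stores its table as an nn.Parameter called `weight`.
weight_is_parameter = isinstance(embedding.weight, nn.Parameter)

# Calling the embedding with indices is a row lookup into that weight.
indices = torch.tensor([2, 0, 2])
lookup_matches = torch.equal(embedding(indices), embedding.weight[indices])

# Copy the values across to show both give identical codebook rows.
with torch.no_grad():
    parameter.copy_(embedding.weight)
same_rows = torch.equal(parameter[indices], embedding(indices))
```

So the choice between the two is mostly about convenience: indexing a raw parameter works just as well as calling the embedding module.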

Thanks a lot for your video

Can you explain this line in more detail:

quant_out = quant_input + (quant_out - quant_input).detach()

Why not just

quant_out = quant_out.detach()

Hello @linhnhut2134, what we want is for the gradients of quant_out to be used as if they were also the gradients of quant_input, kind of like copy-pasting gradients. So in the forward pass we want the result to equal quant_out, but in the backward pass we want it to behave as if it were quant_input. The operation "quant_out = quant_input + (quant_out - quant_input).detach()" gives us that distinction between the forward and backward passes. With just "quant_out = quant_out.detach()", the gradients from the decoder would stop there and never reach quant_input or the encoder.
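
A tiny experiment can show the difference. This is a hedged sketch, not the repo's code: the fixed `codebook`, the single 2-dim latent, the `nearest` helper and the weighted-sum "loss" are all assumptions chosen to keep the numbers easy to follow.

```python
import torch

# Stand-in for the encoder output (quant_input); values are illustrative.
quant_input = torch.tensor([[0.2, 0.9]], requires_grad=True)
# Fixed toy codebook; in a real VQVAE this would be learned.
codebook = torch.tensor([[0.0, 1.0], [1.0, 0.0], [0.5, 0.5]])


def nearest(z):
    """Return the closest codebook vector for each row of z (argmin lookup)."""
    dists = ((z[:, None, :] - codebook[None, :, :]) ** 2).sum(-1)
    return codebook[dists.argmin(dim=-1)]


quant_out = nearest(quant_input)  # picks [0.0, 1.0]

# Straight-through: forward value is quant_out, backward path is quant_input.
st_out = quant_input + (quant_out - quant_input).detach()
loss = (st_out * torch.tensor([[1.0, 2.0]])).sum()  # toy stand-in for a decoder loss
loss.backward()
st_grad = quant_input.grad.clone()  # gradient copied straight onto quant_input

# Plain detach: same forward value, but cut off from the graph entirely.
detached = quant_out.detach()
detached_has_grad = detached.requires_grad
```

Here `st_out` has the same value as `quant_out`, yet `quant_input.grad` receives the loss gradient unchanged, while the detached version has no path back to `quant_input` at all.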

link to code please

Hi @jakula8643, while working on the implementation for the next video, I ended up modifying the VQVAE code and making it a bit messy. I will clean it up and push it to github.com/explainingai-code/VQVAE-Pytorch in a couple of days' time. Apologies for missing this, and I will let you know as soon as I do that.

Code is now pushed to the repo mentioned above

This video fuckin' rips.