- 24

- 106 425

Machine Learning Studio

United States

Приєднався 21 чер 2009

I'm Vahid Mirjalili, a Senior Data Scientist with over five years of experience in Multimodal Machine Learning, Computer Vision, NLP, and Deep Learning. I hold two PhDs from Michigan State University, one in Computer Science and another in Mechanical Engineering.

I've worked on cutting-edge projects in industry, leading teams and developing innovative models in object recognition, segmentation, and language processing. I've co-authored best-selling books like Python Machine Learning and Machine Learning with PyTorch and Scikit-Learn, and I'm currently working on "Grokking Transformers".

This channel is where I share insights, techniques, and practical guides on ML, Transformers, and #LLMs. I cover everything from the inner workings of LLMs to practical guides on building Retrieval-Augmented Generation (#RAG) systems and fine-tuning models for various applications. Subscribe to stay ahead in the ML and AI space!

I've worked on cutting-edge projects in industry, leading teams and developing innovative models in object recognition, segmentation, and language processing. I've co-authored best-selling books like Python Machine Learning and Machine Learning with PyTorch and Scikit-Learn, and I'm currently working on "Grokking Transformers".

This channel is where I share insights, techniques, and practical guides on ML, Transformers, and #LLMs. I cover everything from the inner workings of LLMs to practical guides on building Retrieval-Augmented Generation (#RAG) systems and fine-tuning models for various applications. Subscribe to stay ahead in the ML and AI space!

Enhancing LLMs (an overview)

Discover 3 powerful techniques for enhancing Large Language Models (LLMs) in this video! We cover prompt engineering, Retrieval-Augmented Generation (RAG), and fine-tuning, explaining how each method improves the performance and adaptability of LLMs for various tasks. Perfect for AI enthusiasts and developers looking to optimize their models!

#LLM #Promptengineering #RAG #finetuning

#LLM #Promptengineering #RAG #finetuning

Переглядів: 383

Відео

FlashAttention: Accelerate LLM training

Переглядів 8202 місяці тому

In this video, we cover FlashAttention. FlashAttention is an Io-aware attention algorithm that significantly accelerates the training of LLMs.

An Overview of Object Recognition Tasks

Переглядів 2513 місяці тому

This video gives an overview of object recognition tasks, starting with image classification (binary, multi-class and multi-label), then localization and object detection, and finally image segmentation, including semantic, instance segmentation and finally panoptic segmentation. #computervision

Dataset Management with FiftyOne

Переглядів 1383 місяці тому

Link to the toy dataset for this tutorial on GitHub: github.com/PyML-studio/mlstudio/tree/main/Notebooks/fiftyone-dataset-management/data Link to the Jupyter notebook: github.com/PyML-studio/mlstudio/blob/main/Notebooks/fiftyone-dataset-management/fiftyone-tutorials.ipynb

OpenAI CLIP model explained

Переглядів 3,5 тис.4 місяці тому

CLIP: Contrastive Language-Image Pre-training In this video, I describe the CLIP model published by OpenAI. CLIP is based on Natural Language Supervision for pre-training. Natural Language Supervision is not a new, in fact there are two approaches for this, one approach tries to predict the exact caption for each image, whereas the other approach is based on contrastive loss, where instead of p...

DINO -- Self-supervised ViT

Переглядів 5045 місяців тому

In this video, we cover a very exciting paper, called “Emerging Properties in Self-supervised Vision Transformer”. The proposed method DINO (self-distillation with no labels) is a simplified approach for self-supervised learning in vision domain. Similar to self-supervised transformers in NLP, pre-training ViT with DINO also leads to some emerging properties beyond what they were trained for.

Swin Transformer

Переглядів 2,6 тис.6 місяців тому

In this video, we continue the vision transformer series, covering Swin Transformer, a general-purpose transformer backbone for computer vision. Swin Transformer is based on two key ideas: (1) designing a multi-scale hierarchical backbone suitable for computer vision, and (2) a carefully designed Swin Block composed of two window-based attention for efficient self-attention computation, while s...

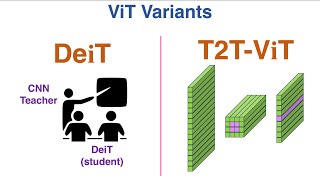

Variants of ViT: DeiT and T2T-ViT

Переглядів 1 тис.7 місяців тому

As you recall from our previous video on ViT, the original ViT needs lots of training data such as JFT-300M. But, if we use a mid-size dataset like ImageNet-1k, the performance of ViT is lower than that of CNNs. In this video, we cover two ViT variants called DeiT (Data Efficient Image Transformers) and Tokens-to-Token ViT (T2T-ViT). Both these models have been able to design vision transformer...

Vision Transformer (ViT)

Переглядів 1,5 тис.8 місяців тому

ViT is a pivotal paper in computer vision, bringing the powers of Transformers to the vision domain, and becoming a fundamental building block of many current vision models. In this video, we delve into the intricate mechanisms of ViT, exploring how this influential model operates. Reference: "An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale", available at arxiv.org/pd...

Evolution of Self-Attention in Vision

Переглядів 1,2 тис.9 місяців тому

In the second installment of this series, the video delves into the advanced world of self-attention in the visual domain. It focuses on three pivotal papers that leverage self-attention mechanisms for image processing. The video builds on the foundation laid by the non-local module discussed in the first video. The spotlight is on attention-augmented convolution and two seminal papers on stand...

Relative Self-Attention Explained

Переглядів 1,3 тис.9 місяців тому

In this video, we dive into a very interesting topic "Relative Self-Attention". First, we will see the differences between relative and absolute position embedding, and then we will cover two algorithms for incorporating relative embedding in self-attention. #transformers #deeplearning

Self-Attention in Image Domain: Non-Local Module

Переглядів 1,2 тис.10 місяців тому

Hi everyone, this is the first video in the vision transformers series, where we will delve into the evolution of self-attention in images, starting with this paper titled "Non-local neural networks".

Introducing a new series on Vision Transformers

Переглядів 80010 місяців тому

Hello Everyone! Welcome to our new video series focused on Vision Transformers. In our previous series, we have extensively covered Transformers for sequences in the domain of Natural Language Processing In this exciting new series, we will explore the vision transformers and approaches for leveraging self-attention in images for tackling computer vision problems.

Linear Complexity in Attention Mechanism: A step-by-step implementation in PyTorch

Переглядів 99710 місяців тому

In our last video, we explored eight distinct algorithms aimed at improving the efficiency of the attention mechanism by minimizing its memory and arithmetic complexity. In this video, we'll be presenting a PyTorch implementation of four of those algorithms that have linear complexity wrt sequence length This demonstration, is intended to help you get familiar with the underlying concepts. Link...

Efficient Self-Attention for Transformers

Переглядів 3,5 тис.11 місяців тому

The memory and computational demands of the original attention mechanism increase quadratically as sequence length grows, rendering it impractical for longer sequences. However, various methods have been developed to streamline the attention mechanism's complexity. In this video, we'll explore some of the most prominent models that address this challenge. #transformers Link to the activation fu...

Variants of Multi-head attention: Multi-query (MQA) and Grouped-query attention (GQA)

Переглядів 7 тис.11 місяців тому

Variants of Multi-head attention: Multi-query (MQA) and Grouped-query attention (GQA)

PostLN, PreLN and ResiDual Transformers

Переглядів 1,9 тис.Рік тому

PostLN, PreLN and ResiDual Transformers

A Dive Into Multihead Attention, Self-Attention and Cross-Attention

Переглядів 29 тис.Рік тому

A Dive Into Multihead Attention, Self-Attention and Cross-Attention

Self-Attention Using Scaled Dot-Product Approach

Переглядів 16 тис.Рік тому

Self-Attention Using Scaled Dot-Product Approach

Matrix Multiplication Concept Explained

Переглядів 4,4 тис.Рік тому

Matrix Multiplication Concept Explained

A Review of 10 Most Popular Activation Functions in Neural Networks

Переглядів 12 тис.Рік тому

A Review of 10 Most Popular Activation Functions in Neural Networks

Your videos are amazing! Clear and well-structured. Are the slides available anywhere?

tunning your audio please

can you suggest good book name to read maths topic about neural networks

thanks , amzing

Is swin transformer applied on classification task?

ممنون بابت مطالب عالی

This is wonderful, thank you. How did you prepare the visuals and diagrams? What tool did you use? This video is exceptional quality

Great presentation.

i want to practice all optimizers with different activation functions with some maths problems and in python could you please suggest good book

159 Leannon Shoal

Great content ❤🎉

fine tunning please more videos on fine tunning

Absolutely! Right after the RAG video, I’ll make a series of fine tuning videos. Thanks for the suggestion. 👍🏻

Cindy Islands

Janelle Square

Miller Cape

0291 Yolanda Viaduct

very nice presentation sir can you share me ppt sir it is useful for me sir

Could you post a video on deit

I have already covered DeIT in this video: Variants of ViT: DeiT and T2T-ViT ua-cam.com/video/h_VwFYDucP8/v-deo.html

@@PyMLstudio An in-depth explanation including flow, formulas and stuff could be helpful, sir

Tabitha View

Retta Creek

Great video series on vit and derivatives, watched all of it. Thank you very much for sharing.

Glad you enjoyed it.

Presley Ford

Purdy Underpass

AdamW and Yogi Optimizer could be considered Universal First order Optimizers. I have experimented on many multi-modal tasks and these two have consistently performed well.

Very nice video. Thank you!

Thanks 🙏🏻

great great 👍💯

Thanks 🙏🏻

Very well explained and while simple, the illustrations are exceptionally clear. Excellent work.

thank you for explaining! very clear! but I'm wondering how do you know WiT dataset is based on 50000 queries and 20000 pairs for each query? I can't find it in the paper.

Thanks for the comment! Please see Page 3, section 2.2: Creating a sufficiently large dataset But it’s 500000 queries, balancing 20000 (Image, text) pairs per query

Great video

Hi, thanks for the amazing video. One question. I get d, q,k,v but didn't get the denotation W.

Thanks, so w is a learnable matrix to get q, k, and v. So to get q, we use q=w_q x, and similarly , for k and v: q=W_q x k=W_k x v=W_v x

@@Lesoleil370 thank you!

I have been looking for that \bar{Q} for hours; I could not realize what "tensor reshaping can be used ..." meant in the original paper. thank you

Thank you for the comment , glad the video was useful for clarifying the concepts

Great

Thanks

Greate explanation, thx :). So the headnumber just tells me how many weight matrices i have for K, Q and V?

may i know how you make these videos?

hi sir please can i have access to the powerpoint?

u save me, thanks and greetings from chile!

Sure, I’m happy that the video was useful

I suggest, you should consider showing your face, you content is awesome and really helpful but for you to grow your channel you must provide the audience a personal touch which in this case is your visible presence. Look, I dont read channel names each time I am looking for content to study, but I have seen that each channel follows a unique way of representing their content, which adds credeblity to the content, If I know you teach well and I see you face in one of the videos realted to the content I am looking for, as a thumbnail. I would jump to it in no time. Thats my opinion but the choice is yours. - A student and Well Wisher.

You are right 👌🏻that would make a lot of difference. I also wanted to work on my channel page but didn’t get a chance to. Thank you for the suggestion 🙏🏻

6:00 Does each attention head only process part of embeded token? Example: Say, there is 100 token and 2 attention heads. Does each head only process 50 tokens. ?? If yes, then how can we make sure each head can understand whole context of sentence, while it only consumes half of sentence?

That’s a great question The multihead attention splits the feature dimension not the sequence dimension. So that way, each head is able to see entire sequence, but working on a smaller feature-size. Example : input is 100 token and each embedding vector is 256 dimensional . Then with 8 heads , each head will process tensors of size 100x16

@@PyMLstudio understood... Great explanation.. Thank you, Bro...

100x16 or 100x32?

thank you for making it so easy to grasp the mathematical concepts!

Glad you found the videos useful

Thanks. Can you please explain the dimensionality "d" means?

Sure, d or d_model refers to the size of the hidden units in each layer. So that’s the size of each embedding vector , as well as the input and output of each layer . The size of query key and values are d/h because multihead attention splits the input of size d by the number of heads.

Please cover FasterViT model too...

Absolutely, I’ll cover that , I have a few other topics lined up, then I’ll get to FasterViT Thanks for the suggestion!

How to get matrix Q, K, and V?

So if we start from the very first step, we tokenize the input sequence , and then we pass this sequence of tokens to an embedded layer. So if we fast track, these embedding reach the attention block as the input so let’s call them tensor X. Now in this attention block, we have 3 learnable matrices Wq, Wk, and Wv, so we multiply each matrix with X and we get Q, K and V respectively.

Wow.. So nice.

what is the difference between self attention and multi head self attention? is both are same just instead of single attention multi head attention use multi heads?

Can you suggest some materials that deal with how transformer can be applied to time series database like EEG ?

Thanks for your concise and insightful description.🙏

Very nice video! I can also imagine that predicting the caption text exactly isn't only more difficult but it would also be more likely result in (more) overfitting if it is learned this way. At 5:43, the pair-wise similarities, they are basically like cross-attention scores?

Yes, in a way, it’s analogous to cross-attention, taking dot-product between the features from the text encoder and image encoder. This dot-product similarity is used as the final output of the model to determine if an image and a text caption are related or not. Good question, thanks for the comment

what would be the advantage of this methods vs Flash attention. Flash attention speeds up the computation and it is an exact computation most of these methods are approximations. I would like if possible to see a video explaining other attention types as Paged attention and Flash Attention. Great content :)

Thank you for the suggestion! You're absolutely right. In this video, I focused on purely algorithmic approaches, not hardware-based solutions like FlashAttention. FlashAttention is an IO-aware exact attention algorithm that uses tiling to reduce memory reads/writes between GPU memory levels, which results in significant speedup without sacrificing model quality. I appreciate your input and will definitely consider making a video to explain FlashAttention!

Thanks for the suggestion, I made a new video on Flash Attention: FlashAttention: Accelerate LLM training ua-cam.com/video/LKwyHWYEIMQ/v-deo.html I would love to hear your comments and if you have any other suggestions

you left out step and sine :D