TheDataPost

United States

Joined 26 Jun 2019

Feature Scaling

Feature scaling clearly explained.

Image Links:

towardsdatascience.com/gradient-descent-algorithm-and-its-variants-10f652806a3

mccormickml.com/2013/08/15/the-gaussian-kernel/

Views: 9,557

Videos

Linear Regression Part II

840 views, 4 years ago

A continued explanation of linear regression. Links: www.quora.com/How-is-it-determined-if-a-slope-is-positive-negative-or-undefined

Overfitting

588 views, 4 years ago

An explanation of the data science concept overfitting. Links: www.geeksforgeeks.org/underfitting-and-overfitting-in-machine-learning/ www.kaggle.com/learn-forum/61822

Splitting Data

1.1K views, 4 years ago

An explanation of what splitting data is and why it is necessary. Links: www.commonlounge.com/discussion/5f6c903d4821416b9b2ad2e4b2950250/history www.kaggle.com/learn-forum/61822

Classification vs. Regression

11K views, 4 years ago

Simple explanation of classification and regression.

Supervised vs. Unsupervised Learning

4.6K views, 4 years ago

A simple explanation of the differences between supervised and unsupervised learning.

Continuous vs. Discrete Values

975 views, 4 years ago

Explanation of the differences between continuous and discrete values.

What is Machine Learning?

3K views, 4 years ago

A quick and simple explanation of what machine learning is. Links: giphy.com/gifs/imadeit-qKltgF7Aw515K www.geeksforgeeks.org/clustering-in-machine-learning/

Random Forests Explanation and Visualization

12K views, 4 years ago

Random forests clearly explained.

Bias Variance Tradeoff

3.2K views, 4 years ago

The bias-variance tradeoff, a core machine learning concept, clearly explained. Links: scott.fortmann-roe.com/docs/BiasVariance.html www.geeksforgeeks.org/underfitting-and-overfitting-in-machine-learning/

DBSCAN Advantages and Disadvantages

2.8K views, 4 years ago

Analysis of the strengths and weaknesses of the DBSCAN algorithm.

K-Means Implementation and Parameter Tuning

8K views, 4 years ago

K-Means Implementation and Parameter Tuning

K-Means Clustering Explanation and Visualization

74K views, 4 years ago

K-Means Clustering Explanation and Visualization

DBSCAN Implementation and Parameter Tuning

9K views, 4 years ago

DBSCAN Implementation and Parameter Tuning

DBSCAN Explanation and Visualization

41K views, 4 years ago

DBSCAN Explanation and Visualization

K-Means Advantages and Disadvantages

4K views, 4 years ago

K-Means Advantages and Disadvantages

That was a really nice explanation, though I wonder if The Algorithm might deboost this video based on what you said around 0:31...

Concise and Clear!

Phenomenal explanation. After watching around a dozen videos and still not being sure what the difference is, you made it so simple in just 2 minutes. Excellent job.

This is the easiest explanation to understand.

Great explanation, thanks.

Exceptional explanation!

Simplest and most to-the-point explanation. Thank you.

Amazing video. Needed this for my data mining course.

Wow, best explanation I've seen.

Awesome video man!

Holy moly! I'm so glad I found this channel! I'm bulk-watching every video.

I'm glad I found this. Easy to understand. Thank you.

@TheDataPost Which tool/software do you use for the visualization?

great vid

The best and easiest explanation ever, good job.

thanks

Perfectly explained, thanks!

Very good video

great vid. Thank you

Thanks

You could use more of the actual terminology, like the fit and predict phases, but overall congrats, a very well-made and concise video.

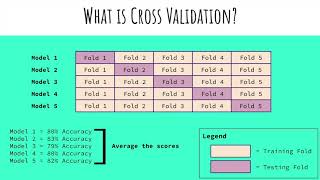

This video covered one aspect that many videos on the same subject do not: you get one model per fold (one set of fitted parameters, one RMSE if that is what you are using to evaluate the model, one set of predictions, etc.), not one final model. So, as the author said, you use it to evaluate how well a certain type of model can perform, by averaging the evaluation statistic over the models you get from training once per fold, NOT to find the final model parameters. This detail is almost never mentioned, and I see many people, like myself, wondering what the end result of this method is and how it is used.
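The point in this comment can be sketched in a few lines of plain Python. This is only an illustration under assumptions that are not from the video: the data are made up, the "model" is a one-feature least-squares line, and the helper names `fit_line`, `rmse`, and `k_fold_scores` are hypothetical. It shows that k-fold cross-validation produces one fitted model and one RMSE per fold, and that the reported result is the average score rather than any single set of parameters.

```python
import math

def fit_line(xs, ys):
    """Ordinary least squares for y = a*x + b on a single feature."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    a = sxy / sxx
    return a, my - a * mx

def rmse(model, xs, ys):
    """Root mean squared error of a fitted (slope, intercept) pair."""
    a, b = model
    return math.sqrt(sum((a * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs))

def k_fold_scores(xs, ys, k):
    """Fit one model per fold and return each fold's held-out RMSE."""
    scores = []
    for fold in range(k):
        # Every k-th point, offset by `fold`, is held out for this fold.
        test_idx = set(range(fold, len(xs), k))
        train = [(x, y) for i, (x, y) in enumerate(zip(xs, ys)) if i not in test_idx]
        test = [(x, y) for i, (x, y) in enumerate(zip(xs, ys)) if i in test_idx]
        model = fit_line([x for x, _ in train], [y for _, y in train])
        scores.append(rmse(model, [x for x, _ in test], [y for _, y in test]))
    return scores

# Made-up data: a line y = 2x + 1 with a small alternating offset as "noise".
xs = [float(i) for i in range(20)]
ys = [2.0 * x + 1.0 + (0.5 if i % 2 else -0.5) for i, x in enumerate(xs)]

scores = k_fold_scores(xs, ys, k=5)       # five models, five RMSE values
mean_rmse = sum(scores) / len(scores)     # the number you actually report
print(scores, mean_rmse)
```

The five fitted lines are discarded after scoring. If this model type looks good enough, a common next step is to refit it once on all of the data to obtain the final parameters.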

Thanks for the video! At the 2:30 mark, which software did you use to create this animation effect?

Very much appreciated. Explained quickly and clearly

Quick and effective. Great video

Thanks 👍

Initial centroids are based on points already in the dataset, rather than being placed randomly as he did at the beginning.
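For context, picking initial centroids from existing data points is one common initialization strategy, not the only one. A minimal sketch of that strategy, assuming made-up 2-D points and a hypothetical helper name `init_centroids_from_data`, could look like this:

```python
import random

def init_centroids_from_data(points, k, seed=0):
    """Pick k distinct data points to serve as the initial centroids."""
    rng = random.Random(seed)     # fixed seed so the choice is repeatable
    return rng.sample(points, k)  # sampling without replacement

# Made-up points for illustration only.
points = [(1.0, 1.0), (1.5, 2.0), (8.0, 8.0), (9.0, 9.5), (5.0, 1.0)]
centroids = init_centroids_from_data(points, k=2)
print(centroids)
```

Starting from real data points guarantees that every centroid begins inside the region the data occupies, whereas arbitrary random coordinates can land far from any point and leave a cluster empty on the first assignment step.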

Excellent tutorial, appreciate your efforts

Best explanation!

amazing

very cool

Very clear, thank you

That's a clear explanation, thanks a lot

I love you, thanks

Now I understand. Thanks

@0:27 The strip function does not seem to work for some reason. It says "'tuple' object has no attribute 'strip'".

Thank you, thank you. I was puzzled about how we decide which model to adopt until I saw this video.

Thanks for this! How do you choose the number of clusters if you don't know it beforehand?

A Gaussian mixture model can be used to estimate the number of clusters.

Or you can use agglomerative clustering, which starts with the number of clusters equal to the number of observations and merges them step by step.

You can use methods such as the elbow method to estimate a suitable number of clusters for each dataset. It computes the WSS (within-cluster sum of squares) for each candidate number of clusters and selects the number at which adding more clusters gives diminishing returns in WSS. You can always find more info online. Good luck!
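As a rough sketch of the elbow method described in this reply, the following plain-Python example runs a very small k-means for several values of k and records the WSS for each. The three-blob data set, the fixed seed, and the helper names `sq_dist` and `kmeans_wss` are assumptions made for illustration and do not come from the video.

```python
import random

def sq_dist(p, q):
    """Squared Euclidean distance between two points."""
    return sum((a - b) ** 2 for a, b in zip(p, q))

def kmeans_wss(points, k, iters=20, seed=0):
    """Run a basic k-means and return the within-cluster sum of squares."""
    rng = random.Random(seed)
    centroids = rng.sample(points, k)  # start from k distinct data points
    for _ in range(iters):
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda c: sq_dist(p, centroids[c]))
            clusters[nearest].append(p)
        # Move each centroid to its cluster mean; keep it in place if the cluster is empty.
        centroids = [
            tuple(sum(dim) / len(cl) for dim in zip(*cl)) if cl else centroids[i]
            for i, cl in enumerate(clusters)
        ]
    return sum(min(sq_dist(p, c) for c in centroids) for p in points)

# Made-up data: three tight blobs of four points each.
points = [
    (0.0, 0.0), (0.5, 0.2), (0.1, 0.6), (0.4, 0.5),
    (10.0, 0.0), (10.4, 0.3), (9.8, 0.5), (10.2, 0.1),
    (5.0, 10.0), (5.3, 10.4), (4.8, 9.7), (5.1, 10.2),
]

wss_by_k = {k: kmeans_wss(points, k) for k in range(1, 7)}
for k, wss in wss_by_k.items():
    print(k, round(wss, 2))
```

Plotting WSS against k and looking for the point where the curve flattens gives the "elbow". Because k-means depends on its starting centroids, a more careful version would repeat each k with several seeds and keep the lowest WSS.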

This video is proof there are only 2 genders out there! Take that woke people

straight to the point, thanks.

Best explanation I have seen so far 👏

very nice! thank you!

Very intuitively explained! kudos!

Short and clear. Thank you

And this was how AI took the world.

This is a very basic algorithm. I don't think this is the world-ending AI we need to be concerned about. Now, reinforcement learning networks are potentially dangerous.

Does it mean it has to visualize the points, and then select the initial centroids?

great

Nice

amazing simulation in the end

Great explanation. You made it simple and concise.